Blind Writes in Distributed Systems

Balancing High-Throughput Performance with Data Consistency

A Blind Write is a specific type of database operation where a transaction updates a piece of data without reading its current value first.

In simple terms, it is a “overwrite” command rather than a “calculate and update” command. While this sounds simple, blind writes create complex edge cases in database concurrency control and consistency models.

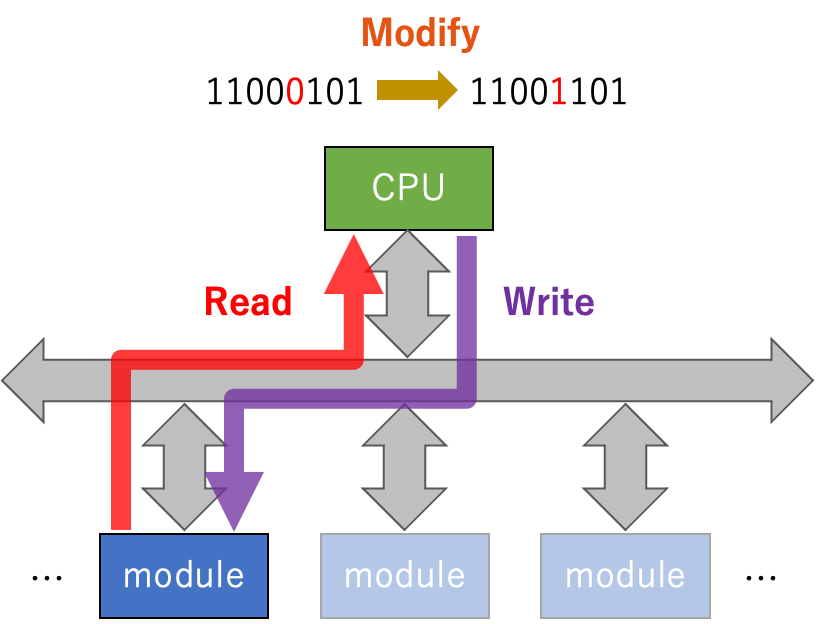

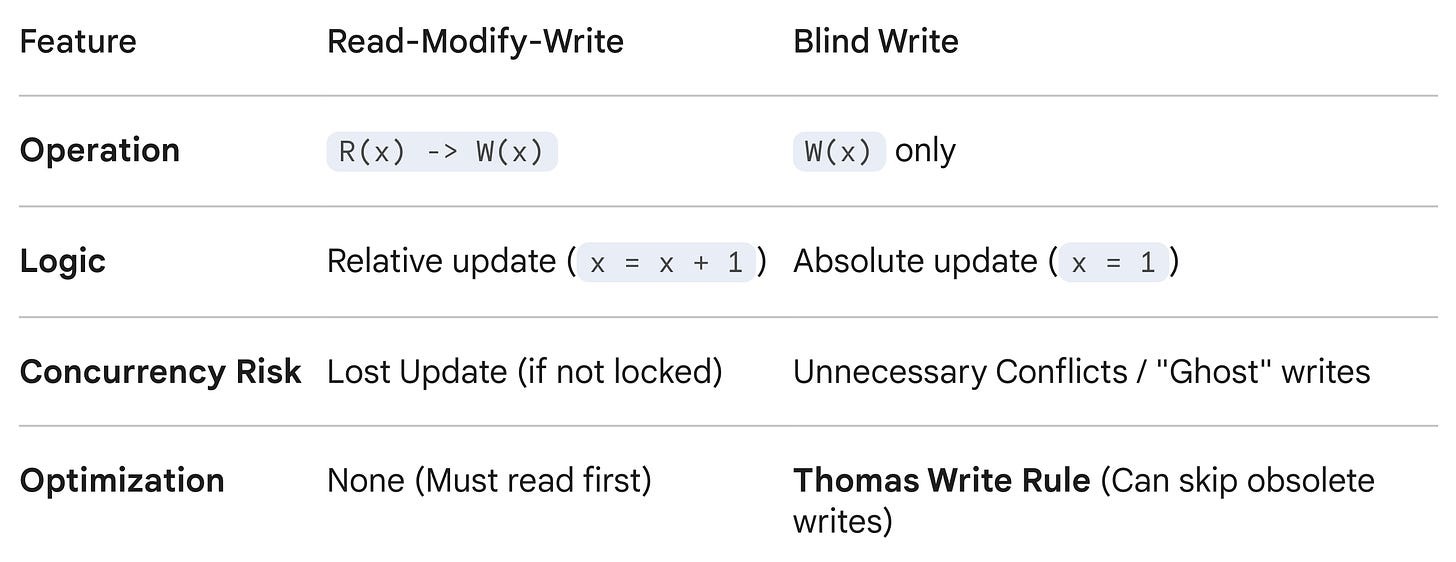

1. The Mechanics: Blind Write vs. Read-Modify-Write

To understand a blind write, you must contrast it with the standard way applications modify data.

Read-Modify-Write (The Standard)

Most updates depend on the current state of the data.

Logic: “Read the current bank balance, add $10, write the new balance.”

SQL:

UPDATE accounts SET balance = balance + 10 WHERE id = 1;Dependency: You must know the previous value to determine the new value.

Blind Write (The Overwrite)

The new value is completely independent of the old value. The transaction does not care what is currently in the database cell; it simply stomps on it.

Logic: “Set the account status to ‘Active’. I don’t care if it was ‘Pending’, ‘Banned’, or already ‘Active’.”

SQL:

UPDATE accounts SET status = 'Active' WHERE id = 1;Dependency: None.

If a read r(X) of a resource X does not occur before a write w(X) to that resource in a transaction, then w(X) is blind write

2. Why Blind Writes Matter: The “Useless Write” Paradox

Blind writes are fascinating in database theory because they challenge the rules of Serializability (the guarantee that parallel transactions run safely).

In strict concurrency models, the database assumes every write is precious. However, blind writes can create situations where a write is technically “useless” but still causes conflicts.

The Scenario

Imagine three transactions running on the same data item x:

T1 writes

x = 5(Blind Write).T2 writes

x = 10(Blind Write).T3 reads

x.

If T2 runs after T1 but before T3, the value 5 written by T1 is never read by anyone. It is written and immediately overwritten. In theory, T1 could have been skipped entirely, and the final state of the database (and what T3 sees) would be exactly the same.

This leads to two major implications in advanced database systems:

A. View Serializability

Blind writes are the reason we have the distinction between Conflict Serializability (strict) and View Serializability (looser).

Conflict Serializability would say T1 and T2 conflict because they both write to

x. It demands a strict ordering.View Serializability recognizes that T1’s write is a “blind write” that nobody ever saw. Therefore, it permits schedules that Conflict Serializability would reject, potentially allowing for higher concurrency.

Conflict Serializability treats

Write(T1)andWrite(T2)as a conflict that must be ordered. View Serializability realizes that ifWrite(T1)is essentially “useless” (blind written and never read before being overwritten), the schedule is valid even if the order looks weird.

𝐋𝐞𝐚𝐫𝐧 𝐭𝐨 𝐛𝐮𝐢𝐥𝐝 𝐆𝐢𝐭, 𝐃𝐨𝐜𝐤𝐞𝐫, 𝐑𝐞𝐝𝐢𝐬, 𝐇𝐓𝐓𝐏 𝐬𝐞𝐫𝐯𝐞𝐫𝐬, 𝐚𝐧𝐝 𝐜𝐨𝐦𝐩𝐢𝐥𝐞𝐫𝐬, 𝐟𝐫𝐨𝐦 𝐬𝐜𝐫𝐚𝐭𝐜𝐡. Get 40% OFF CodeCrafters: https://app.codecrafters.io/join?via=the-coding-gopher

B. The Thomas Write Rule

In distributed databases (like those using Timestamp Ordering), blind writes allow for a powerful optimization called the Thomas Write Rule.

The Rule: If a transaction tries to write to

x, but finds that a newer transaction has already written tox, the older transaction can usually be ignored.Why? Because the write is “blind.” Since nobody read the intermediate value (otherwise the timestamps would have caught it), and a newer value is already in place, the late write is obsolete on arrival. The database can safely pretend the write happened and was immediately overwritten.

If a transaction tries to write a value dated “10:00 AM” but finds a value already there dated “10:05 AM,” it can skip the write entirely. This is only safe because the write is a Blind Write. If it were a Read-Modify-Write, the transaction would have to abort and restart because it would be basing its calculation on obsolete data.

3. Implications for Consistency

Blind writes can be dangerous in systems with Eventual Consistency or Weak Consistency.

If you perform a blind write (e.g., SET status = 'Active') on one replica, and another user performs a different blind write (e.g., SET status = 'Archived') on a different replica, the system has no “original value” to compare them against to resolve the conflict.

Since neither transaction read the data first, the database cannot detect that a change occurred between the start and end of the transaction. This often forces databases to use “Last Write Wins” (LWW) resolution, where the update with the marginally later timestamp overwrites the other, potentially leading to lost data updates without the application realizing it.

In distributed databases (like Cassandra or DynamoDB), blind writes are the primary source of “silent” data loss when using Last Write Wins. Since the database doesn’t check the previous state, it blindly accepts the timestamp, which can lead to the “lost update” anomaly if not handled carefully.

Summary Table

TL;DR

A blind write in DBMS occurs when a transaction updates a data item (W(X)) without reading its current value (R(X)) first. It is a “write-only” operation used to improve performance in high-load systems by avoiding necessary locks. While beneficial for speed, blind writes can cause data inconsistency or, when used with view serializability, allow schedules not possible under conflict serializability