Consistent Hashing 101

The "one ring to rule them all" approach

How a simple mathematical ring prevents catastrophic cache misses in distributed systems.

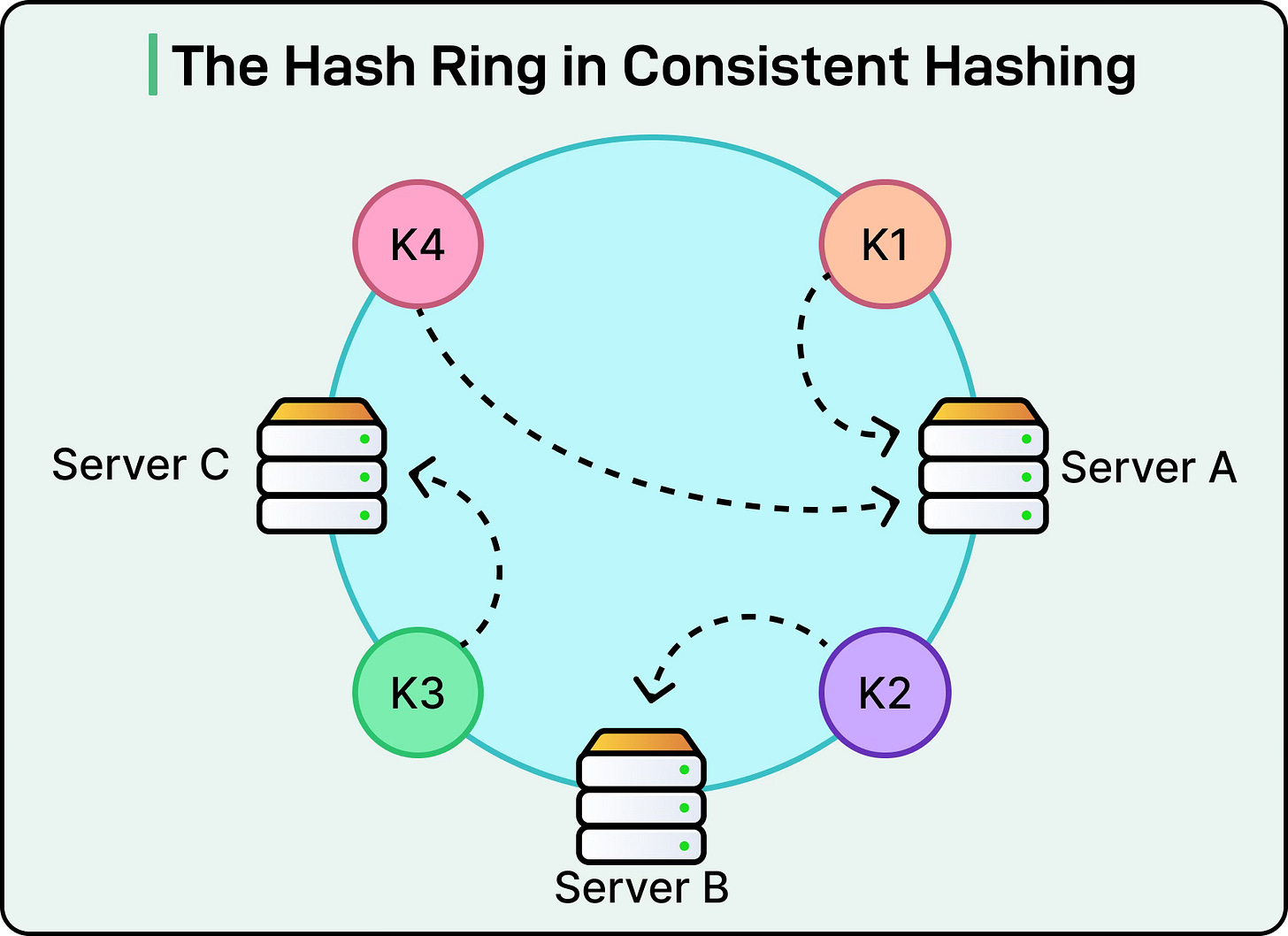

Imagine you are building a massive application. To handle the traffic, you deploy three cache servers (Server A, B, and C) to store user sessions. When a request comes in for user:99, how do you know which server holds that data?

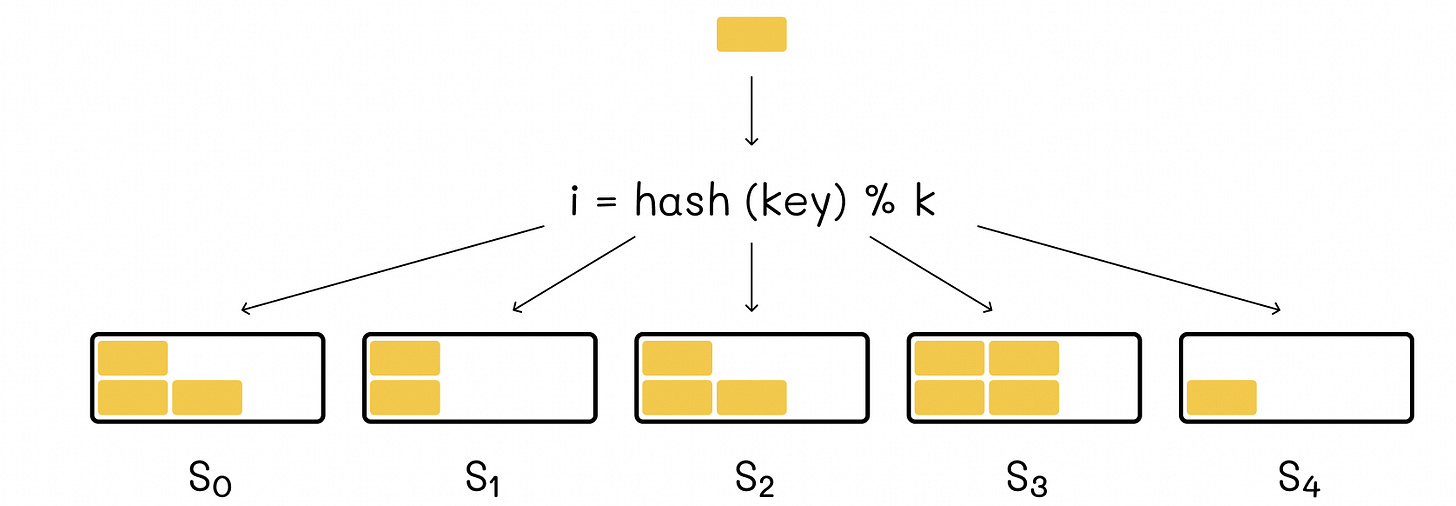

The standard, naive approach is to use the modulo operator. You hash the user ID to get a number, and then find the remainder when divided by the number of servers: hash("user:99") % 3.

This works flawlessly—until your application goes viral.

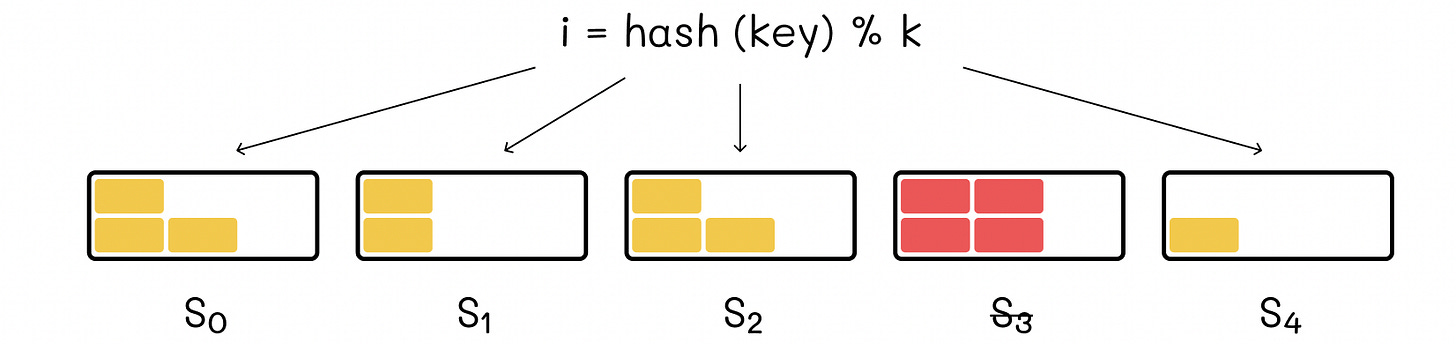

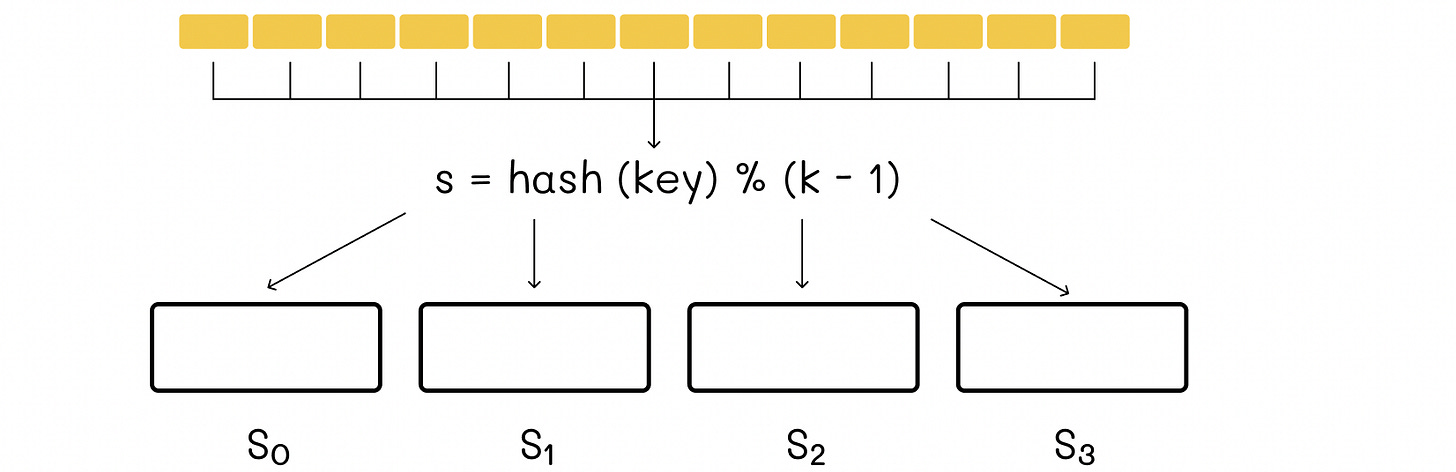

Suddenly, you need to add a fourth server to handle the load. Your formula is now hash("user:99") % 4. Because the denominator changed, almost every single key in your system now mathematically maps to a completely different server.

The result? A remapping catastrophe. You experience a 75% cache miss rate, all your requests bypass the cache, they hit your primary database simultaneously, and your entire system crashes.

Remapping catastrophe refers to the massive, system-wide data migration that occurs when traditional modulo-based hashing is used in distributed systems with a changing number of servers. When the server count (

n) changes in a standard modulo approach (hash(key) % n), nearly all keys are assigned to new nodes, causing cache misses, database overloading, and potential system failure.

Enter Consistent Hashing.

What is Consistent Hashing?

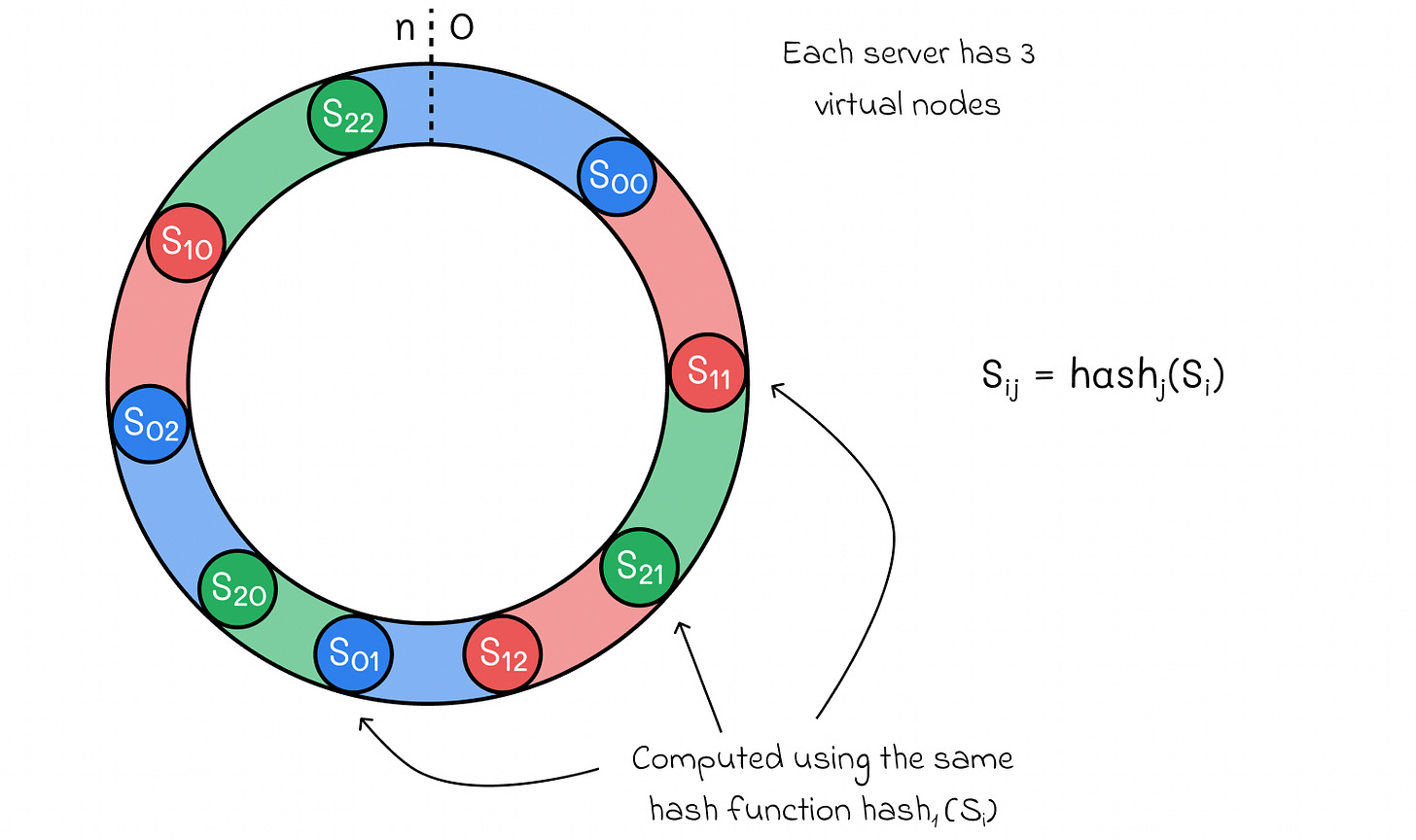

Originally introduced in an academic paper by MIT researchers in 1997, consistent hashing is a distributed routing algorithm that solves the remapping problem. Instead of relying on the total number of servers to determine data placement, it maps both the servers and the data onto a fixed mathematical geometry: The Hash Ring.

Step 1: Building the Ring

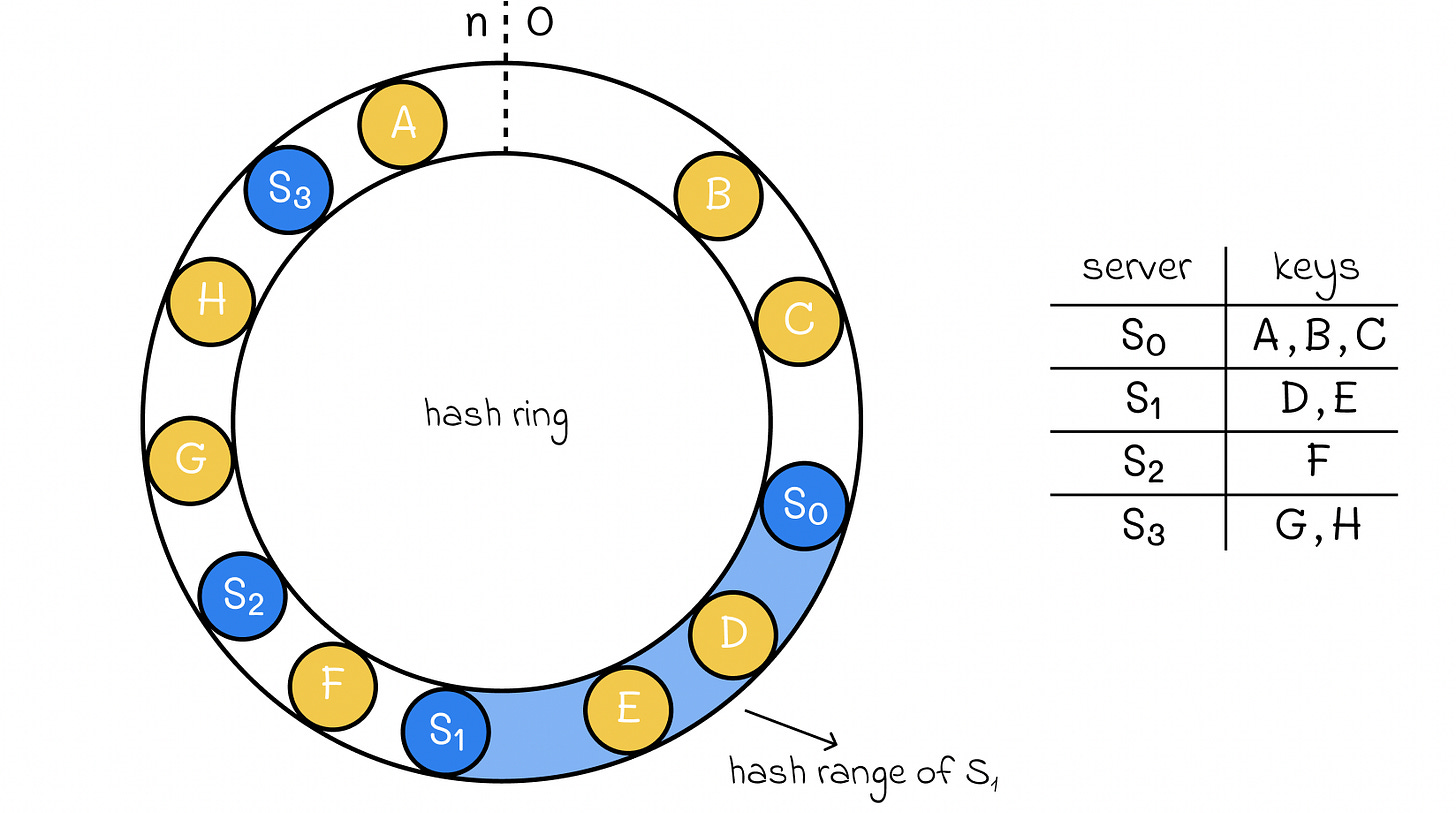

Imagine a circle. We take a standard hash function (like SHA-1 or MD5) that outputs a massive range of numbers, typically 0 to 232 - 1. We map this entire range onto the edge of the circle. The top is 0, and as you move clockwise, the numbers increase until you wrap back around to the top.

Step 2: Placing the Servers

Next, we hash the IP addresses or hostnames of our cache servers (e.g., hash("192.168.1.1")). This outputs a specific number on the ring. We place Server A, Server B, and Server C at their corresponding coordinates on this circle.

Step 3: Routing the Data

When a request comes in for user:99, we hash that key. It lands somewhere on the edge of the ring. To find which server holds the data, the algorithm simply moves clockwise around the ring from the key’s position until it hits the very first server.

TL;DR. Consistent hashing uses a virtual circular ring to map data keys and server nodes, ensuring that when nodes are added or removed, only

k/nkeys (keys/nodes) require remapping, rather than the entire dataset. Servers and keys are hashed to positions on this ring, with keys assigned to the first node encountered moving clockwise.

The Magic of Scaling

The true brilliance of the hash ring reveals itself when your infrastructure changes.

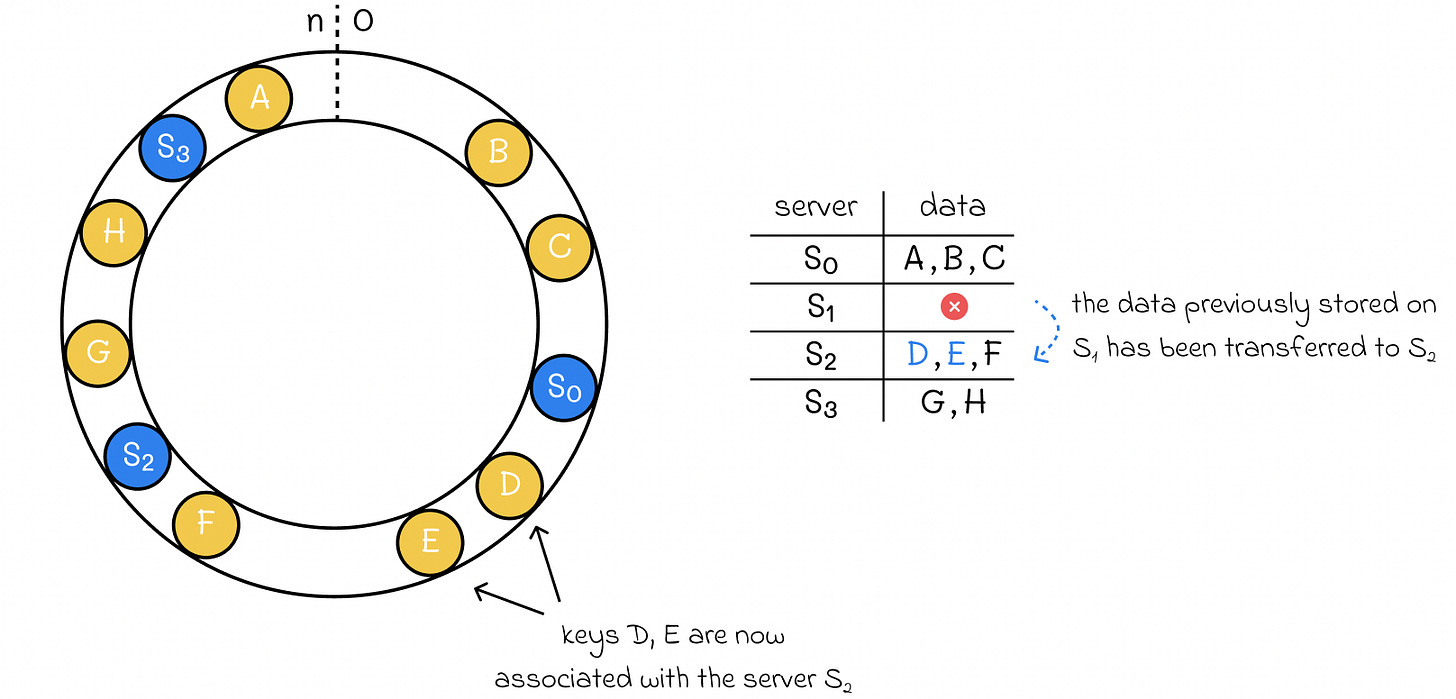

Removing a Server

If Server 1 catches fire and dies, it is removed from the ring.

What happens? The keys that were assigned to Server 1 now simply continue clockwise and land on Server 2. Again, the rest of the cluster doesn’t even notice.

Instead of remapping 75% of your data, consistent hashing ensures that only k/n keys are moved (where k is the total number of keys and N is the number of servers).

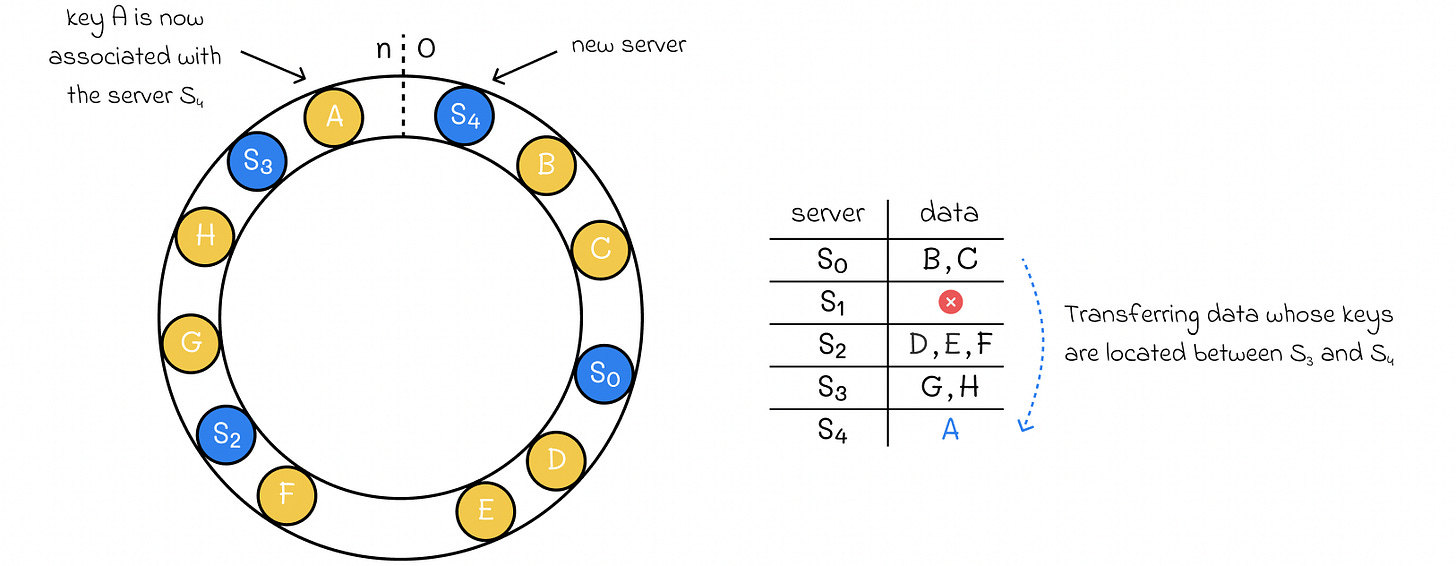

Adding a Server

Let’s say you add Server 4 to handle a traffic spike. Server 4 is hashed and lands between Server 3 and Server 0 on the ring.

What happens? Only the data that falls exactly between Server 3 and Server 4 gets remapped to the new server. All the data routed to Server 3, Server 2, and the rest of Server 0 remains completely untouched.

𝐋𝐞𝐚𝐫𝐧 𝐭𝐨 𝐛𝐮𝐢𝐥𝐝 𝐆𝐢𝐭, 𝐃𝐨𝐜𝐤𝐞𝐫, 𝐑𝐞𝐝𝐢𝐬, 𝐇𝐓𝐓𝐏 𝐬𝐞𝐫𝐯𝐞𝐫𝐬, 𝐚𝐧𝐝 𝐜𝐨𝐦𝐩𝐢𝐥𝐞𝐫𝐬, 𝐟𝐫𝐨𝐦 𝐬𝐜𝐫𝐚𝐭𝐜𝐡. Get 40% OFF CodeCrafters: https://app.codecrafters.io/join?via=the-coding-gopher