Coroutines

When Code Pauses Without Blocking

Introduction

“Concurrency is not parallelism.” — Rob Pike

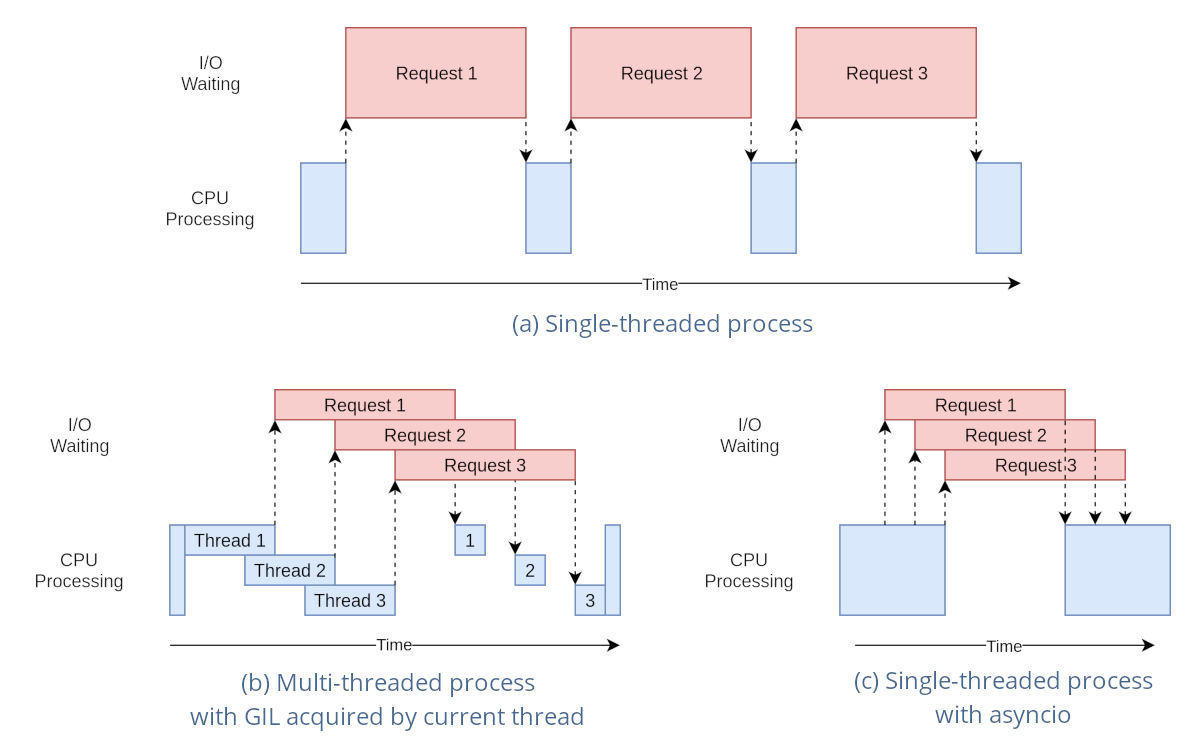

Most real programs do not spend their time computing. They wait. They wait for disks, networks, timers, and other programs. Coroutines exist because blocking a whole thread just to wait is wasteful. They let execution pause at precise points and resume later without giving up clarity or control.

What a Coroutine Is

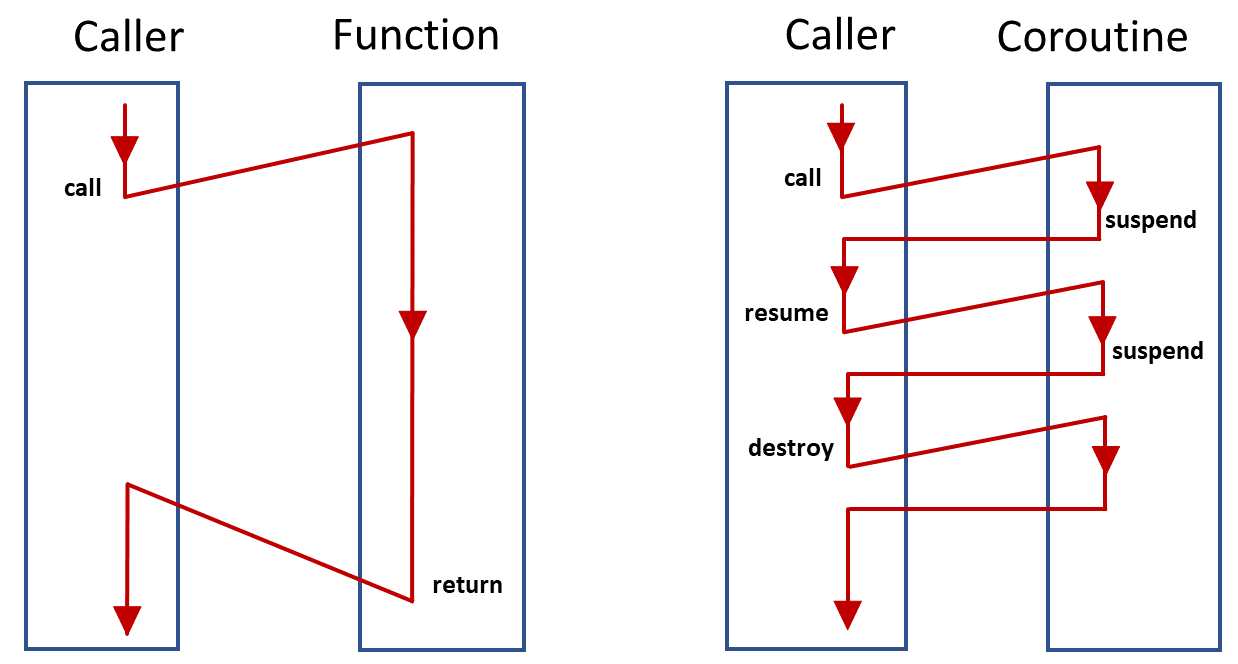

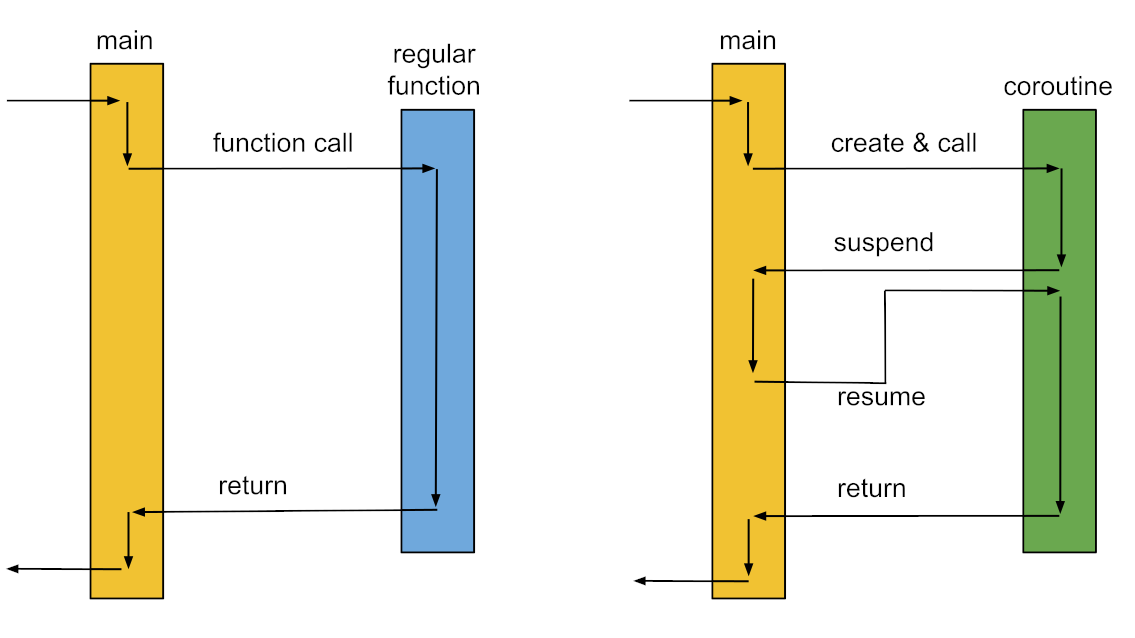

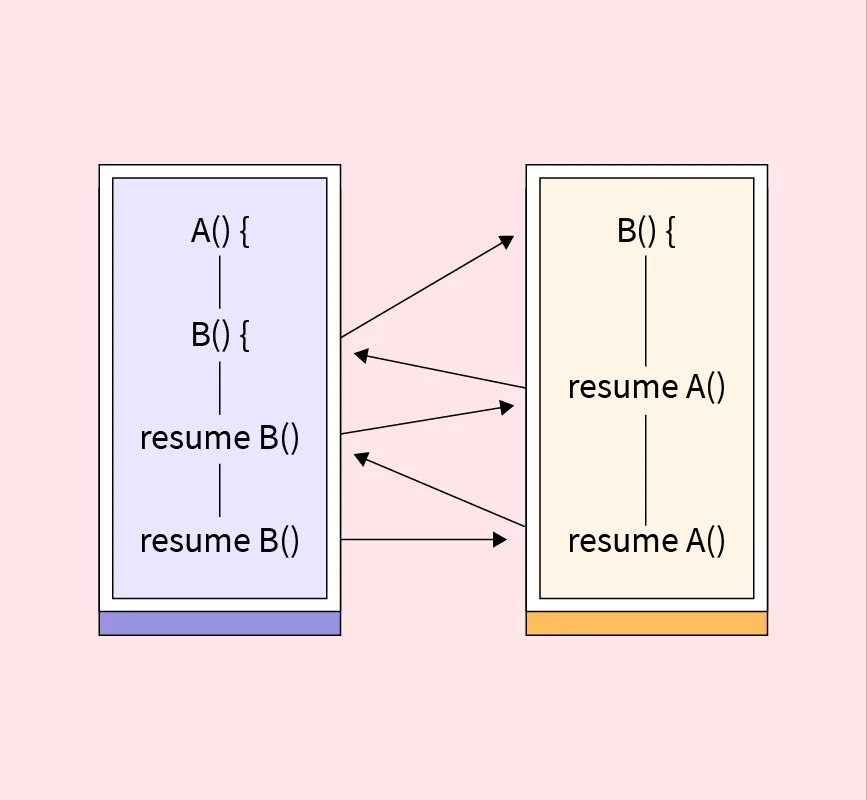

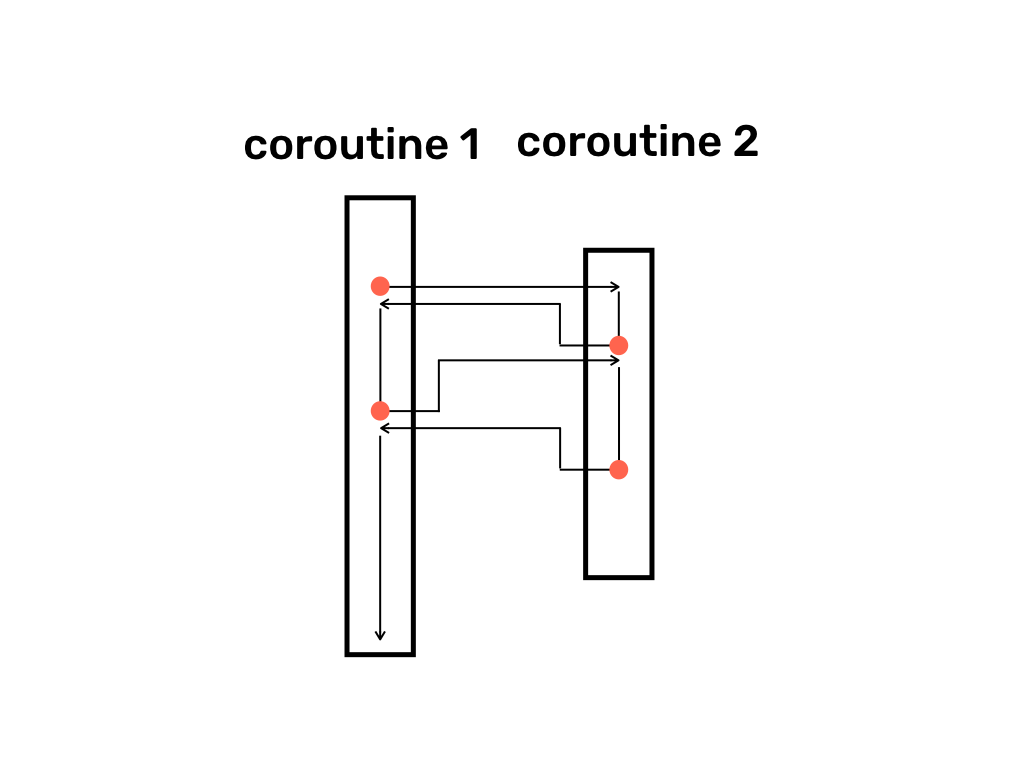

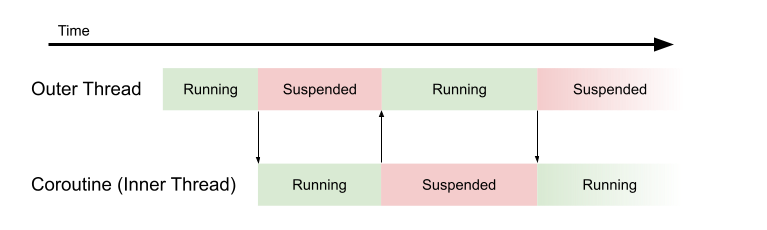

A coroutine is a function that can suspend its execution and later resume from the same point. Unlike a normal function, which runs from start to finish in one call, a coroutine can yield control back to a scheduler and then continue as if nothing happened. Its local variables and execution position are preserved across suspensions.

This makes a coroutine effectively a state machine that you do not have to write by hand. The runtime takes responsibility for saving and restoring state, while the code stays linear and readable.
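To make the state machine comparison concrete, here is a minimal sketch that writes the same two-step logic twice: once as a class that tracks its own position, and once as a Python generator, which stands in here for a suspendable function. The names ManualSteps, generated_steps, and run_manual are made up for illustration.

```python
from typing import Iterator, Optional


class ManualSteps:
    """Hand-written state machine: the position in the logic is an explicit field."""

    def __init__(self) -> None:
        self.state = 0
        self.total = 0

    def resume(self) -> Optional[str]:
        if self.state == 0:
            self.total += 1
            self.state = 1
            return f"step one, total={self.total}"
        if self.state == 1:
            self.total += 10
            self.state = 2
            return f"step two, total={self.total}"
        return None  # finished


def generated_steps() -> Iterator[str]:
    """Same logic; the runtime remembers the position and the local variable."""
    total = 0
    total += 1
    yield f"step one, total={total}"
    total += 10
    yield f"step two, total={total}"


def run_manual() -> list:
    machine = ManualSteps()
    messages = []
    while (message := machine.resume()) is not None:
        messages.append(message)
    return messages


if __name__ == "__main__":
    print(run_manual())
    print(list(generated_steps()))
```

Both versions produce the same messages. The generator version simply reads top to bottom, which is the point of the abstraction.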

Coroutines are primarily a tool for concurrency: managing many tasks that seem to run at once by suspending and resuming them on a few threads. They can also achieve parallelism, meaning truly simultaneous execution on multiple CPU cores, when they are dispatched to different threads. Either way, they let non-blocking I/O and CPU-intensive work proceed without blocking the main thread, at a lower cost than one traditional thread per task.

Concurrency (Dealing with many things at once)

Definition. Handling multiple tasks over a period, making progress on each, but not necessarily simultaneously.

Coroutines & Concurrency. Coroutines excel here. They are lightweight, suspendable computations that allow you to write asynchronous code that looks sequential. A single thread can run many coroutines, switching between them (context switching) when one suspends (e.g., waiting for data), keeping the thread busy.

Example. A web server handling 100 user requests. Instead of dedicating 100 threads, it runs each request as a coroutine on a few threads. A coroutine suspends while it waits for a database response, allowing other coroutines to run.
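The web server scenario can be sketched in a few lines. This is an illustration under stated assumptions, not a real server: handle_request, serve_all, and run_demo are hypothetical names, and asyncio.sleep stands in for the database call.

```python
import asyncio
import threading


async def handle_request(request_id):
    # Stand-in for a database query: the coroutine suspends here
    # and the event loop is free to run other requests.
    await asyncio.sleep(0.01)
    return request_id, threading.get_ident()


async def serve_all(count):
    # Start every request as a coroutine and wait for all of them.
    return await asyncio.gather(*(handle_request(i) for i in range(count)))


def run_demo(count=100):
    results = asyncio.run(serve_all(count))
    thread_ids = {thread_id for _, thread_id in results}
    return len(results), len(thread_ids)


if __name__ == "__main__":
    handled, threads_used = run_demo()
    print(f"handled {handled} requests on {threads_used} thread(s)")
```

Every request reports the same thread identifier, so all 100 were interleaved on a single thread.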

Parallelism (Doing many things at once)

Definition. Executing multiple tasks at the exact same time, typically requiring multiple CPU cores.

Coroutines & Parallelism. Coroutines provide concurrency, not automatic parallelism. To get parallelism, you must explicitly run coroutines on different threads (in Kotlin, for example, by using dispatchers such as Dispatchers.Default or Dispatchers.IO), so that CPU-bound tasks can use multiple cores.

Example. Calculating 100 hashes. You can launch 100 coroutines, but if they all run on one thread, they are merely concurrent. To run them in parallel, you dispatch them to a thread pool, letting different cores crunch numbers simultaneously.
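A rough Python analogue of dispatching hash work to a thread pool might look like the sketch below. It relies on one assumption worth stating loudly: CPython’s hashlib releases the global interpreter lock while hashing large inputs, so the threads can genuinely overlap. For pure-Python computation, a process pool would be the usual substitute. The helper names make_blocks, hash_block, and hash_all are invented for this example.

```python
import asyncio
import hashlib
from concurrent.futures import ThreadPoolExecutor


def make_blocks(count=8, size=1_000_000):
    # Illustrative input: `count` buffers of `size` bytes each.
    return [bytes([i]) * size for i in range(count)]


def hash_block(data):
    # Ordinary blocking function; it runs on a pool thread, not on the event loop.
    return hashlib.sha256(data).hexdigest()


async def hash_all(blocks, max_workers=4):
    loop = asyncio.get_running_loop()
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Each call is handed to the pool; the coroutine only awaits the results.
        futures = [loop.run_in_executor(pool, hash_block, block) for block in blocks]
        return await asyncio.gather(*futures)


if __name__ == "__main__":
    digests = asyncio.run(hash_all(make_blocks()))
    print(len(digests), "digests computed")
```

The coroutine stays in charge of coordination, while the pool decides which thread, and therefore which core, does each hash.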

A Simple Coroutine Example

In Python, coroutines are expressed using async and await. Consider a coroutine that waits for data and then processes it.

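    import asyncio

    async def fetch_data():
        print("waiting for data")
        await asyncio.sleep(1)  # suspend here; the event loop may run other work
        print("data received")
        return 42

    async def main():
        result = await fetch_data()  # pause main() until fetch_data() returns
        print("result:", result)

    asyncio.run(main())  # start the event loop and run main() to completion

Step-by-step explanation.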

import asyncio. This line imports Python’s library for writing concurrent code using the async/await syntax.

async def fetch_data():. This defines a coroutine (an asynchronous function) named fetch_data.

await asyncio.sleep(1). Instead of stopping the program for one second, this line tells the event loop that it is free to run other coroutines for one second and to return to this spot when the time is up. It simulates fetching data from a slow source. No thread is blocked during this time.

async def main():. This defines the primary coroutine that manages the flow of the program.

result = await fetch_data(). This line calls the fetch_data() coroutine. The await keyword ensures the main coroutine pauses until fetch_data() is complete and returns its value (42).

asyncio.run(main()). This is the entry point that starts the asynchronous event loop and runs the top-level main() coroutine to completion.

Why This Is Different From Blocking

If the same logic were written using a blocking sleep, the entire thread would be idle during the wait. With coroutines, waiting is explicit and cooperative. The coroutine chooses when it is safe to pause, and the runtime schedules other work in the meantime.
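The difference is easy to measure. The sketch below runs three waits through asyncio.gather twice: once with time.sleep, which blocks the thread, and once with asyncio.sleep, which suspends the coroutine. The function names and the 0.1 second delay are arbitrary choices for illustration.

```python
import asyncio
import time


async def blocking_wait(delay):
    # time.sleep holds the thread, so the event loop cannot run anything else.
    time.sleep(delay)


async def cooperative_wait(delay):
    # asyncio.sleep suspends this coroutine and returns control to the event loop.
    await asyncio.sleep(delay)


async def timed(waiter, delay=0.1, count=3):
    start = time.perf_counter()
    await asyncio.gather(*(waiter(delay) for _ in range(count)))
    return time.perf_counter() - start


def compare():
    blocking = asyncio.run(timed(blocking_wait))
    cooperative = asyncio.run(timed(cooperative_wait))
    return blocking, cooperative


if __name__ == "__main__":
    blocking, cooperative = compare()
    print(f"blocking: {blocking:.2f}s, cooperative: {cooperative:.2f}s")
```

The blocking version takes roughly the sum of the delays, because each wait monopolizes the thread. The cooperative version takes roughly one delay, because the waits overlap.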

This property makes coroutines predictable. Context switches only happen at await points, not at arbitrary instructions. That predictability simplifies reasoning about shared state and reduces subtle concurrency bugs.
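One consequence can be shown with a shared counter. In this sketch (add_many and run_counter are hypothetical names), several coroutines perform a read-modify-write on the same dictionary. Because there is no await between the read and the write, no other coroutine on the same event loop can slip in between them.

```python
import asyncio


async def add_many(counter, times):
    for _ in range(times):
        current = counter["value"]      # read
        counter["value"] = current + 1  # write; no await separates the two steps
        await asyncio.sleep(0)          # explicit suspension point: others may run now


async def run_counter(workers=4, times=500):
    counter = {"value": 0}
    await asyncio.gather(*(add_many(counter, times) for _ in range(workers)))
    return counter["value"]


if __name__ == "__main__":
    print(asyncio.run(run_counter()))
```

No lock is needed here, and no increment is lost. Moving the await between the read and the write would reintroduce lost updates, which is why it pays to know exactly where the suspension points are.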

Coroutines Versus Threads

Threads are managed by the operating system and can be preempted at almost any instruction. Coroutines are managed in user space and only yield when they decide to. Switching between coroutines involves saving a small amount of state and jumping to another execution context, which is far cheaper than a kernel-mediated thread switch.

This difference matters in systems that handle large numbers of concurrent tasks. A server that dedicates a thread to every connection quickly hits limits, because each thread reserves its own stack and adds scheduling overhead. A server that uses coroutines can handle many more concurrent clients with the same resources.

In contrast to threads, which are pre-emptively scheduled by the operating system, coroutine switches are cooperative.

Coroutine Scheduling in Practice

Coroutines do not schedule themselves. They rely on a scheduler or event loop. The scheduler tracks which coroutines are ready to run and which are waiting on external events.
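As a rough mental model, a scheduler can be as small as a queue of suspended functions. The toy below uses plain generators and a round-robin queue. It is a deliberately simplified sketch: it tracks only the ready tasks, whereas a real event loop also keeps track of timers and I/O events. The names task and run_round_robin are made up for this example.

```python
from collections import deque


def task(name, steps, log):
    for i in range(1, steps + 1):
        log.append(f"{name}:{i}")
        yield  # suspension point: hand control back to the scheduler


def run_round_robin(tasks):
    ready = deque(tasks)  # tasks that can make progress right now
    while ready:
        current = ready.popleft()
        try:
            next(current)          # resume the task until its next suspension
        except StopIteration:
            continue               # task finished; do not reschedule it
        ready.append(current)      # still alive: back of the queue


def demo():
    log = []
    run_round_robin([task("A", 2, log), task("B", 3, log)])
    return log


if __name__ == "__main__":
    print(demo())  # ['A:1', 'B:1', 'A:2', 'B:2', 'B:3']
```

Each task runs only until its next yield, which is why the output alternates between A and B until A finishes.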

Consider this example with multiple coroutines running concurrently.

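    import asyncio

    async def worker(name, delay):
        print(f"{name} started")
        await asyncio.sleep(delay)  # suspend; other workers run in the meantime
        print(f"{name} finished")

    async def main():
        # Run all three workers concurrently and wait for every one to finish.
        await asyncio.gather(
            worker("A", 1),
            worker("B", 2),
            worker("C", 1),
        )

    asyncio.run(main())

All three workers start immediately. While one coroutine is waiting, others are allowed to run. Even though only one thread may be involved, progress appears concurrent because execution is interleaved at suspension points. The whole run takes about two seconds, the longest single delay, rather than the four seconds the delays add up to.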

asyncio.gather() is a core utility function in Python’s asyncio library used to run multiple awaitable objects (coroutines, tasks, or futures) concurrently and aggregate their results. It allows you to treat a group of asynchronous operations as a single unit and wait for all of them to complete.
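Two details of how results are aggregated are worth seeing in code: results come back in the order the awaitables were passed, not the order they finished, and return_exceptions=True collects exceptions as ordinary results instead of raising the first one. The helper coroutines below are hypothetical.

```python
import asyncio


async def delayed_value(value, delay):
    await asyncio.sleep(delay)
    return value


async def fails():
    raise ValueError("boom")


async def collect():
    # "fast" finishes first, but the result list follows argument order.
    return await asyncio.gather(
        delayed_value("slow", 0.03),
        delayed_value("fast", 0.01),
    )


async def collect_with_errors():
    # With return_exceptions=True the exception object is placed in the result list.
    return await asyncio.gather(
        delayed_value("ok", 0.01),
        fails(),
        return_exceptions=True,
    )


if __name__ == "__main__":
    print(asyncio.run(collect()))  # ['slow', 'fast']
    print(asyncio.run(collect_with_errors()))
```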

Coroutines and State Preservation

One of the most important aspects of coroutines is that local state survives suspension. Variables do not reset. Call stacks do not unwind. This is what allows coroutine code to look like ordinary sequential logic while behaving asynchronously.

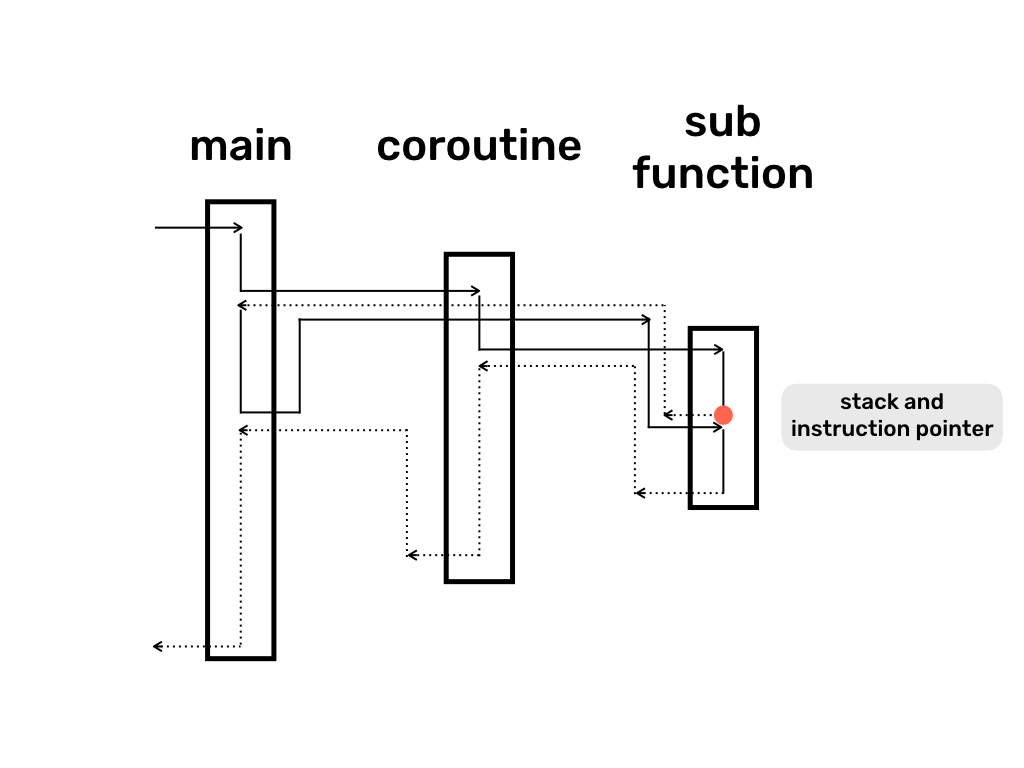

Under the hood, the runtime stores enough information to reconstruct the execution context. This may include the instruction pointer, local variables, and references to awaited operations. The details vary by language, but the abstraction remains consistent.
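In CPython, this saved context can be inspected directly. The sketch below drives a coroutine by hand, without an event loop, and reads its frame after each suspension. Suspend, count_to, and drive are illustrative names, and cr_frame is a CPython coroutine attribute, so other runtimes may expose this differently.

```python
class Suspend:
    """Minimal awaitable: awaiting it hands control back to whoever drives the coroutine."""

    def __await__(self):
        yield  # a bare yield inside __await__ suspends the awaiting coroutine


async def count_to(limit):
    count = 0
    while count < limit:
        count += 1
        await Suspend()  # local variable `count` must survive this pause
    return count


def drive(limit=3):
    coro = count_to(limit)
    snapshots = []
    try:
        while True:
            coro.send(None)  # resume until the next suspension point
            # Copy the locals stored in the suspended coroutine's frame.
            snapshots.append(dict(coro.cr_frame.f_locals))
    except StopIteration as finished:
        # The coroutine's return value travels on the StopIteration exception.
        return snapshots, finished.value


if __name__ == "__main__":
    snapshots, result = drive()
    print([snap["count"] for snap in snapshots], result)  # [1, 2, 3] 3
```

Each snapshot shows count exactly where the coroutine left it, which is the state preservation described above.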

Async and Await Are Coroutine Syntax

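Async and await are not magic keywords. They are structured ways to define suspension points in coroutines. Each await marks a place where execution may pause. In languages such as C#, Kotlin, and Rust, the compiler transforms the function into a resumable state machine that the runtime can manage. Python reaches the same result by keeping the suspended function’s frame alive and resuming it later.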

Understanding this transformation explains why certain patterns work well and others do not. Long-running CPU work inside a coroutine blocks progress because there is no await where execution can yield.
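The effect of a missing await shows up in the order of events. In this sketch, a ticker coroutine shares the event loop with a worker that does its chunks of computation either without yielding or with an explicit asyncio.sleep(0) after each chunk. The names ticker, cpu_hog, and run are hypothetical, and the small sum stands in for real CPU work.

```python
import asyncio


async def ticker(log):
    for i in range(3):
        log.append(f"tick {i}")
        await asyncio.sleep(0)  # yield to the event loop after every tick


async def cpu_hog(log, cooperative):
    for chunk in range(3):
        sum(range(10_000))  # stand-in for a slice of CPU-bound work
        log.append(f"chunk {chunk}")
        if cooperative:
            await asyncio.sleep(0)  # give other coroutines a turn


async def run(cooperative):
    log = []
    await asyncio.gather(ticker(log), cpu_hog(log, cooperative))
    return log


if __name__ == "__main__":
    print(asyncio.run(run(cooperative=False)))
    print(asyncio.run(run(cooperative=True)))
```

Without the await, all three chunks complete before the ticker gets a second turn. With it, ticks and chunks alternate.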

When Coroutines Should Not Be Used

Coroutines are not a substitute for parallelism. CPU-intensive work still requires multiple threads or processes to run on multiple cores. If heavy computation runs inside a coroutine, it blocks every other coroutine on the same thread.

For this reason, many systems use coroutines for coordination and I/O, and delegate computation to worker threads or pools.
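One way to express that split in Python is asyncio.to_thread, available from Python 3.9. In the sketch below, a heartbeat coroutine keeps ticking on the event loop while a blocking function runs on a worker thread. The function names are made up, and time.sleep is only a stand-in for heavy or blocking work.

```python
import asyncio
import time


def blocking_work(duration):
    # Stand-in for heavy computation or a blocking library call.
    time.sleep(duration)
    return "done"


async def heartbeat(ticks, stop):
    # Keeps running on the event loop; each tick proves the loop is responsive.
    while not stop.is_set():
        ticks.append(time.perf_counter())
        await asyncio.sleep(0.01)


async def main():
    ticks = []
    stop = asyncio.Event()
    beat = asyncio.create_task(heartbeat(ticks, stop))
    # The blocking call runs on a worker thread; this coroutine just awaits it.
    result = await asyncio.to_thread(blocking_work, 0.1)
    stop.set()
    await beat
    return result, len(ticks)


if __name__ == "__main__":
    result, tick_count = asyncio.run(main())
    print(result, "with", tick_count, "heartbeats in the meantime")
```

Calling blocking_work directly inside main() would stop the heartbeat for the full duration instead.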

Why Coroutines Matter

Coroutines change how programs wait. Instead of blocking resources, they suspend execution cleanly and resume only when useful work can be done. This leads to systems that scale better, remain responsive under load, and are easier to reason about than callback-driven designs.

Once you understand coroutines, modern async runtimes stop feeling mysterious. You can see exactly when code runs, when it pauses, and why performance behaves the way it does.

coroutines are so cool!