defer: The Go Feature Everyone Uses and Almost No One Understands

Why it used to be slow, how it actually works, and why that matters

“defer looks like syntax sugar. It isn’t.”

In Go, defer is one of those features you learn on day one and stop thinking about forever. It’s clean, expressive, and hard to misuse. You open a file, you defer file.Close(), and you move on with your life.

For years, though, experienced Go developers quietly avoided defer in hot paths. Not because it was unsafe — but because it was surprisingly expensive. That performance reputation wasn’t superstition. It was a direct consequence of how defer was implemented inside the runtime.

Understanding why explains a lot about Go’s design philosophy.

What defer promises

At the language level, defer is simple: it schedules a function call to run just before the surrounding function returns to its caller, whether that function finishes normally or panics.

```go
func example() {
	defer fmt.Println("world")

	fmt.Println("hello")
}
```

This prints:

```
hello
world
```

What’s less obvious is that defer also captures arguments at the point where the defer statement is executed, not when the function returns.

```go
for i := 0; i < 3; i++ {
	defer fmt.Println(i)
}
```

This prints:

```
2
1
0
```

That single detail already hints that defer is doing real work at runtime.

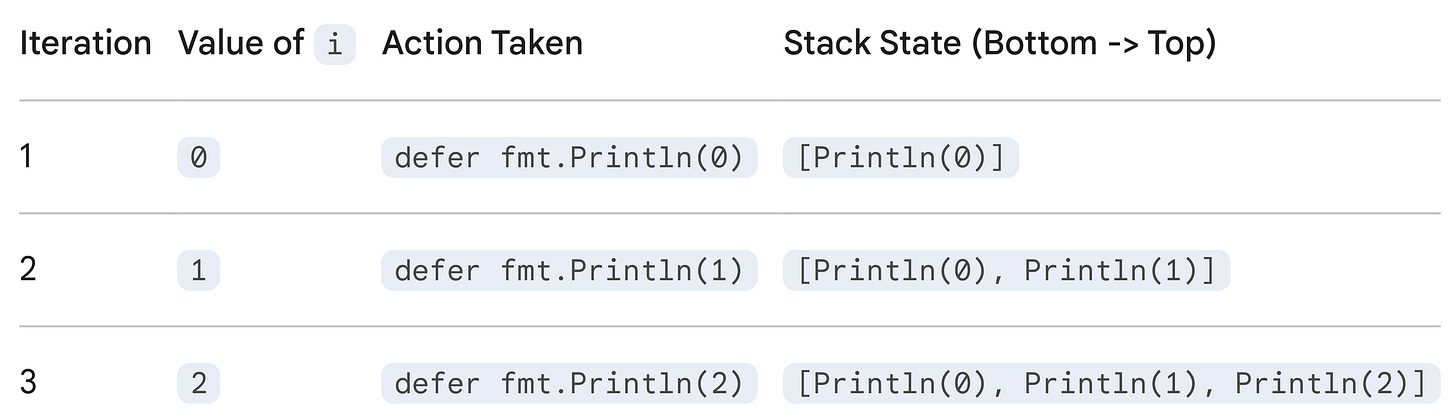

This behavior happens because of two specific rules in Go regarding defer: Immediate Argument Evaluation and Last-In, First-Out (LIFO) Execution.

Here is the breakdown of exactly what is happening under the hood.

1. Argument Evaluation (The “Snapshot”)

defer captures the arguments immediately.

When the defer statement is hit during the loop, Go evaluates the current value of i right then and there. It freezes that value and stores it alongside the function call. It does not wait to see what i will be at the end of the function.

Loop 1 (i=0): Go schedules “Print 0”.

Loop 2 (i=1): Go schedules “Print 1”.

Loop 3 (i=2): Go schedules “Print 2”.

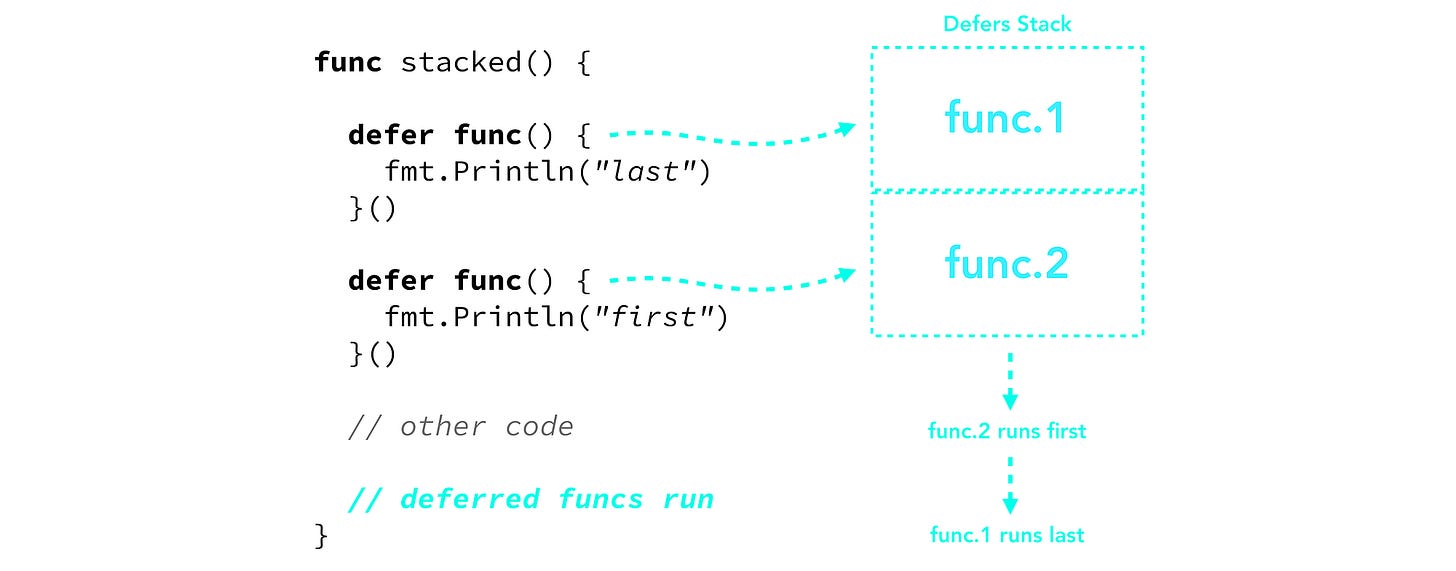

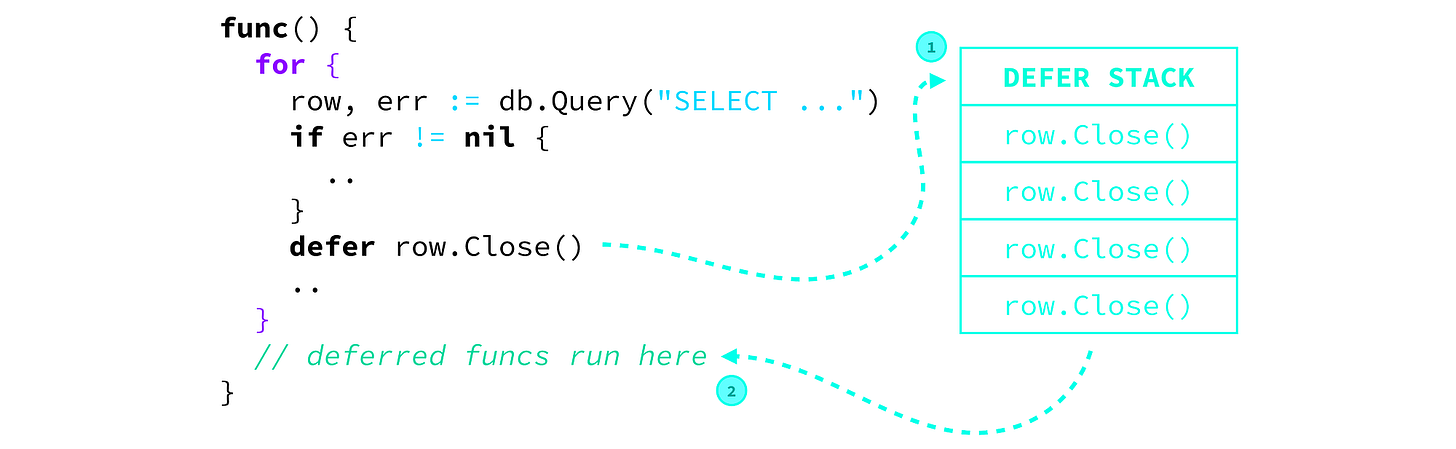

2. The Stack (Last-In, First-Out)

Deferred function calls are pushed onto a stack. When the surrounding function returns, the stack pops the calls off one by one. This means the last function deferred is the first one to execute.

After the Loop (Popping from Stack)

Once the function finishes, Go processes the stack from top to bottom:

Pop Top: Run fmt.Println(2) ⮕ Prints 2

Pop Next: Run fmt.Println(1) ⮕ Prints 1

Pop Last: Run fmt.Println(0) ⮕ Prints 0

Common Pitfall. Closures

This distinction matters because a closure (an anonymous function) behaves differently when it reads i directly instead of receiving i as an argument.

If you had written this instead:

```go
for i := 0; i < 3; i++ {
	defer func() {
		fmt.Println(i) // i is captured as a variable, not copied as a value
	}()
}
```

Before Go 1.22, this printed 3 three times. The closure refers to the single variable i shared by every iteration, not to the value i had when defer ran. By the time the deferred functions execute, the loop has finished and i has become 3. Since Go 1.22, each iteration of a for loop declares a fresh i, so the same code prints 2, 1, 0 in modules that declare go 1.22 or later. Code built with an older language version still shows the old behavior.

How defer worked originally

Before Go 1.14, defer was implemented using a runtime-managed defer stack.

Every time the program hit a defer statement, the runtime would:

Allocate a small defer record

Store the function pointer and arguments

Push it onto a linked list associated with the current goroutine

When the function returned, the runtime would pop that list and execute each deferred call in last-in-first-out order.

Conceptually, it looked like this:

```
goroutine
└── defer list
    ├── defer #3
    ├── defer #2
    └── defer #1
```

This worked beautifully and consistently, but it had costs.

Quoting the Go 1.14 release notes: “This release improves the performance of most uses of defer to incur almost zero overhead compared to calling the deferred function directly. As a result, defer can now be used in performance-critical code without overhead concerns.”

Why this was slow

The performance issues came from what had to happen at runtime.

Each defer meant:

Heap allocation (or at least heap pressure)

Pointer chasing through a linked list

Runtime bookkeeping on function exit

In tight loops, this added up fast.

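```go
for i := 0; i < 1_000_000; i++ {
	defer f()
}
```

This code wasn’t just slow; it was pathological. Every iteration allocated a new defer record, and all one million records stayed on the goroutine’s list until the function returned. Even when the deferred function was trivial, the overhead of managing defer records dominated execution time.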

Note: This is why you’ll still find old Go advice saying “never use defer in a loop.”

That advice was well founded. A defer inside a loop is still the main case the compiler cannot optimize.

Why Go kept defer anyway

Given the cost, why didn’t Go remove or redesign defer earlier?

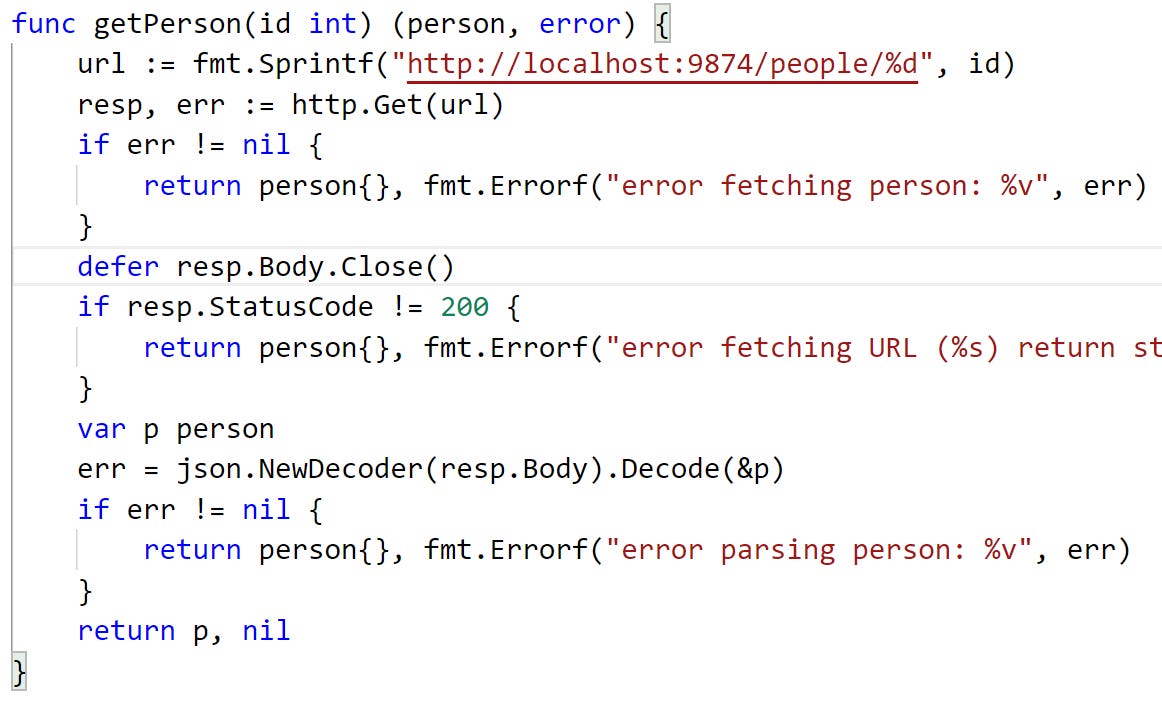

Because defer solves a correctness problem, not a convenience problem.

It guarantees cleanup in the presence of:

Multiple return paths

Early returns

Panics

Manual cleanup logic is easy to get wrong. defer makes the right thing the easy thing — even if it costs a little performance.

Go consistently chooses reliability over micro-optimizations.

What changed in Go 1.14: open-coded defers

In Go 1.14, the compiler introduced open-coded defers.

When the compiler can prove that:

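A function has a small, fixed number of defer statements (at most 8 in the gc compiler)

None of them appears inside a loop

The number of return statements times the number of defers is small enough that duplicating the cleanup code at every exit stays cheap

…it replaces the runtime defer list with inline cleanup code. A defer inside an if statement does not disqualify the function. The compiler tracks which defer statements actually executed in a small bitmask and checks it at each exit.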

Instead of pushing defer records, the compiler generates something closer to:

```go
func example() {
	// normal code
	// ...

	// epilogue
	deferFunc3()
	deferFunc2()
	deferFunc1()
}
```

There are no defer records, no linked list, and almost no runtime bookkeeping on the normal return path.

For many common cases — especially defer file.Close() — defer became essentially free.

Open-coded defers are a performance optimization for simple defer statements that are not inside loops. Instead of creating a runtime structure for each call (such as a heap-allocated defer record), the compiler emits direct calls to the deferred functions at the function’s exits. The function and its arguments live at locations known at compile time, in the function’s own stack frame. This makes defer nearly a zero-cost abstraction in these common scenarios.

How it works

Optimization. Instead of building a linked list of defer calls (which involves heap allocations), the compiler recognizes patterns like defer f() outside loops.

Inlining. It inserts the cleanup call (e.g., f.Close()) directly at each exit of the function, treating it like a direct function call while still ensuring it runs on return.

Stack Management. Arguments to the deferred function (like a file handle f) are kept alive on the stack specifically for the deferred call, ensuring they’re available even if the function panics, as explained in this GitHub issue.

FUNCDATA. The compiler emits special FUNCDATA to track the exact stack locations of the function pointer and arguments. This lets the runtime find and run open-coded defers during a panic even though no defer record exists.

Performance. Benchmarks show open-coded defers are dramatically faster (e.g., 5.6 ns/op) than standard defers (e.g., 35.4 ns/op), approaching the speed of a direct function call (4.4 ns/op).
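To check numbers like these on your own machine, you can call testing.Benchmark from an ordinary program. This sketch compares a deferred call with a direct one. The function names are hypothetical. The `//go:noinline` directives keep the compiler from optimizing either version away. Results will vary with hardware and Go version.

```go
package main

import (
	"fmt"
	"testing"
)

var sink int

// work is the function being called, either deferred or directly.
//
//go:noinline
func work() { sink++ }

//go:noinline
func withDefer() { defer work() }

//go:noinline
func direct() { work() }

// compare runs both variants through testing.Benchmark, which does not
// require the "go test" command.
func compare() (deferred, plain testing.BenchmarkResult) {
	deferred = testing.Benchmark(func(b *testing.B) {
		for i := 0; i < b.N; i++ {
			withDefer()
		}
	})
	plain = testing.Benchmark(func(b *testing.B) {
		for i := 0; i < b.N; i++ {
			direct()
		}
	})
	return deferred, plain
}

func main() {
	d, p := compare()
	fmt.Println("defer: ", d.NsPerOp(), "ns/op")
	fmt.Println("direct:", p.NsPerOp(), "ns/op")
}
```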


Why defer is still sometimes slow

Not all defers can be open-coded.

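A defer falls back to the runtime mechanism if it:

Appears in a loop

Sits in a function with more than 8 defer statements

Sits in a function with so many return paths that copying the cleanup code to every exit would be too costly

In any of these cases, the compiler must fall back to the old runtime mechanism. A defer that is merely conditional, such as one inside an if, can still be open-coded.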

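```go
for _, f := range files {
	defer f.Close() // still runtime-managed
}
```

This is why performance-sensitive Go code still avoids defer inside hot loops. The reason is not that defer is bad, but that the compiler can’t optimize every case. This particular loop also has a second problem: every file stays open until the surrounding function returns, not until the end of its iteration.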

What this tells us about Go

The evolution of defer is the perfect case study for Go’s engineering strategy. When defer shipped, the team prioritized safety mechanics over raw performance:

Safety first. It ensured your mutex.Unlock() always ran, preventing deadlocks, even if it cost extra CPU cycles.

Simplicity first. They avoided complex “try-catch-finally” blocks in favor of a single keyword.

Optimization last. For years, defer was slow because every call went through a runtime-managed defer record. Those records were heap-allocated until Go 1.13 moved most of them onto the stack. In Go 1.14, open-coded defers removed the record entirely for the common cases, turning deferred calls into direct calls at function exit.

The Result. The feature became virtually free to use (dropping from ~35 ns to ~6 ns per call in the benchmark above) without developers having to rewrite a single line of code.

TL;DR for the lazy devs

The defer statement in Go postpones the execution of a function until the surrounding function returns. It is used mainly for cleanup actions, such as closing files, releasing resources, or unlocking mutexes, and it ensures these actions run regardless of how the function exits (whether it returns normally or panics).

Concepts

Timing of Execution. A deferred function is executed when the function that contains the defer statement finishes.

Argument Evaluation. The arguments to the deferred function are evaluated immediately when the defer statement itself is executed, not when the actual function call runs later.

Stacking (LIFO Order). If multiple defer statements are present in a single function, they are pushed onto a stack and executed in Last-In, First-Out (LIFO) order when the surrounding function returns. The most recently deferred function runs first.

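Guaranteed Execution. defer guarantees that cleanup code runs even if the function returns early with an error or a panic is raised within it, similar to finally blocks in other languages. The notable exception is os.Exit (and helpers such as log.Fatal that call it), which ends the program without running deferred calls.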

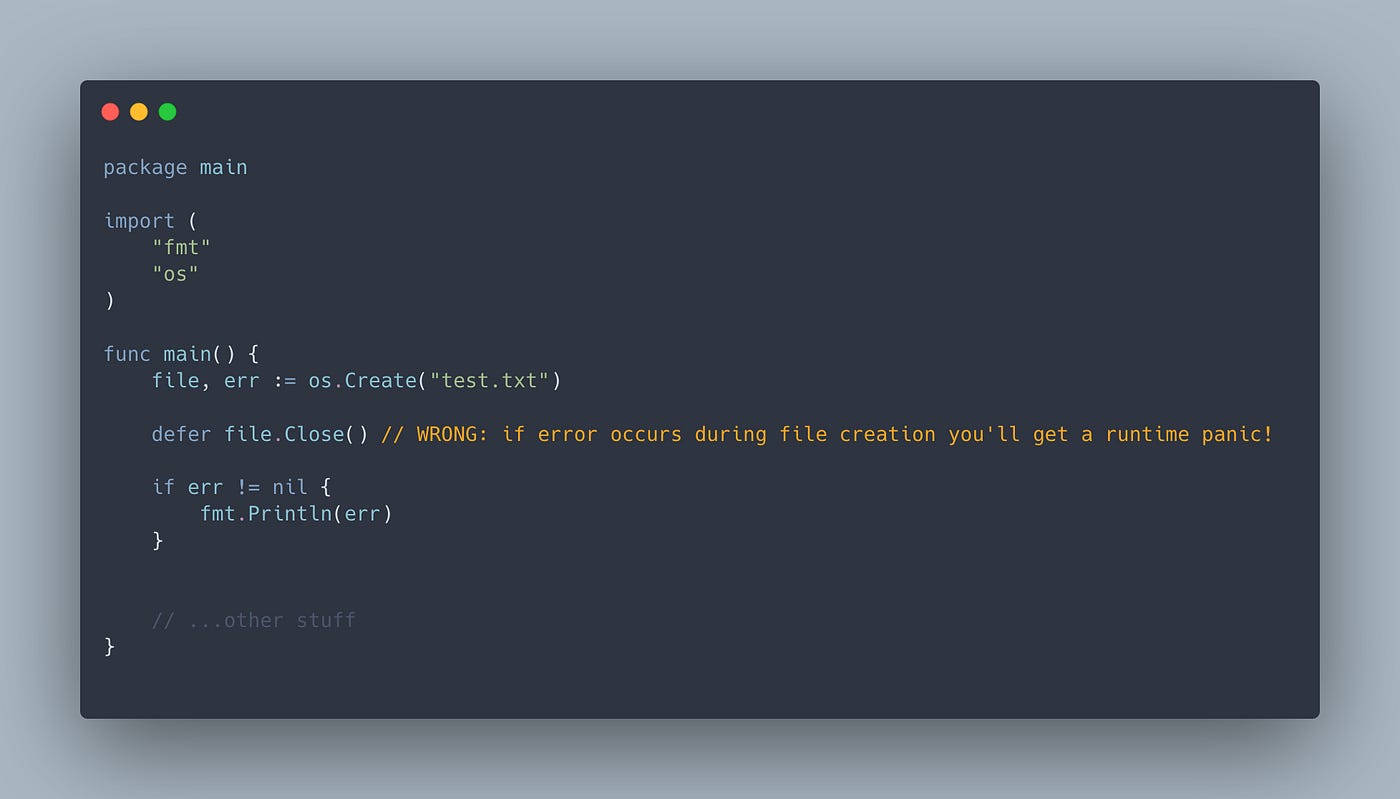

Example Use Case. Resource Cleanup

The most common use of defer is to guarantee that resources are released, such as closing a file after it’s opened. This keeps the acquisition and release code next to each other, which improves readability and prevents resource leaks.

```go
package main

import (
	"fmt"
	"os"
)

func readFile(filename string) error {
	file, err := os.Open(filename)
	if err != nil {
		return err // Returns early if open fails; there is nothing to close
	}
	// Defer the closing of the file right after opening it.
	// This ensures file.Close() runs when readFile exits,
	// regardless of which return statement is reached.
	defer file.Close()

	// The rest of the function proceeds to read the file
	fmt.Printf("Successfully opened file: %s\n", filename)
	// ... read logic ...

	return nil // Returns normally; file.Close() runs now
}

func main() {
	// "example.txt" is a placeholder path for illustration.
	if err := readFile("example.txt"); err != nil {
		fmt.Println("error:", err)
	}
}
```

Multiple Defers Example (LIFO)

Here is an example demonstrating the LIFO execution order:

```go
package main

import "fmt"

func main() {
	fmt.Println("Counting:")
	for i := 0; i < 3; i++ {
		defer fmt.Println(i) // Deferred calls are pushed onto a stack
	}
	fmt.Println("Done")
}

// Output:
// Counting:
// Done
// 2
// 1
// 0
```

When main returns, the deferred calls run in the reverse of the order in which their defer statements executed: fmt.Println(2), then fmt.Println(1), then fmt.Println(0).

Final thought

defer isn’t syntax sugar. It’s a control-flow guarantee backed by runtime machinery. Its historical performance cost wasn’t an accident — it was the price of correctness.

Today, that price is often optimized away.

But knowing why it existed makes you a better Go developer — and helps you recognize when the old rules still apply.
