The CAP Theorem

The three pillars of CAP explained with zero cap

The CAP Theorem (also known as Brewer’s Theorem) is a fundamental principle in computer science that helps engineers design distributed systems (systems where data is stored across multiple computers or “nodes”).

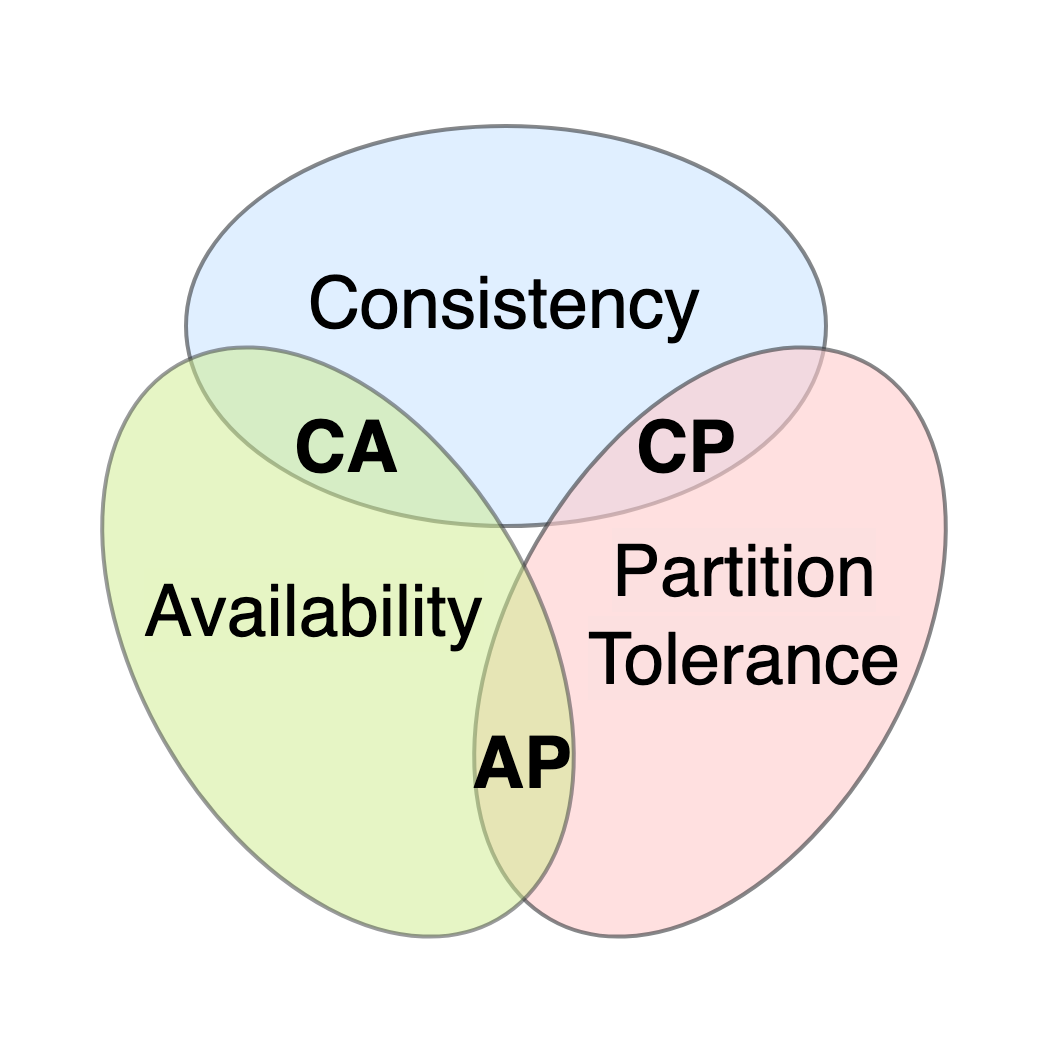

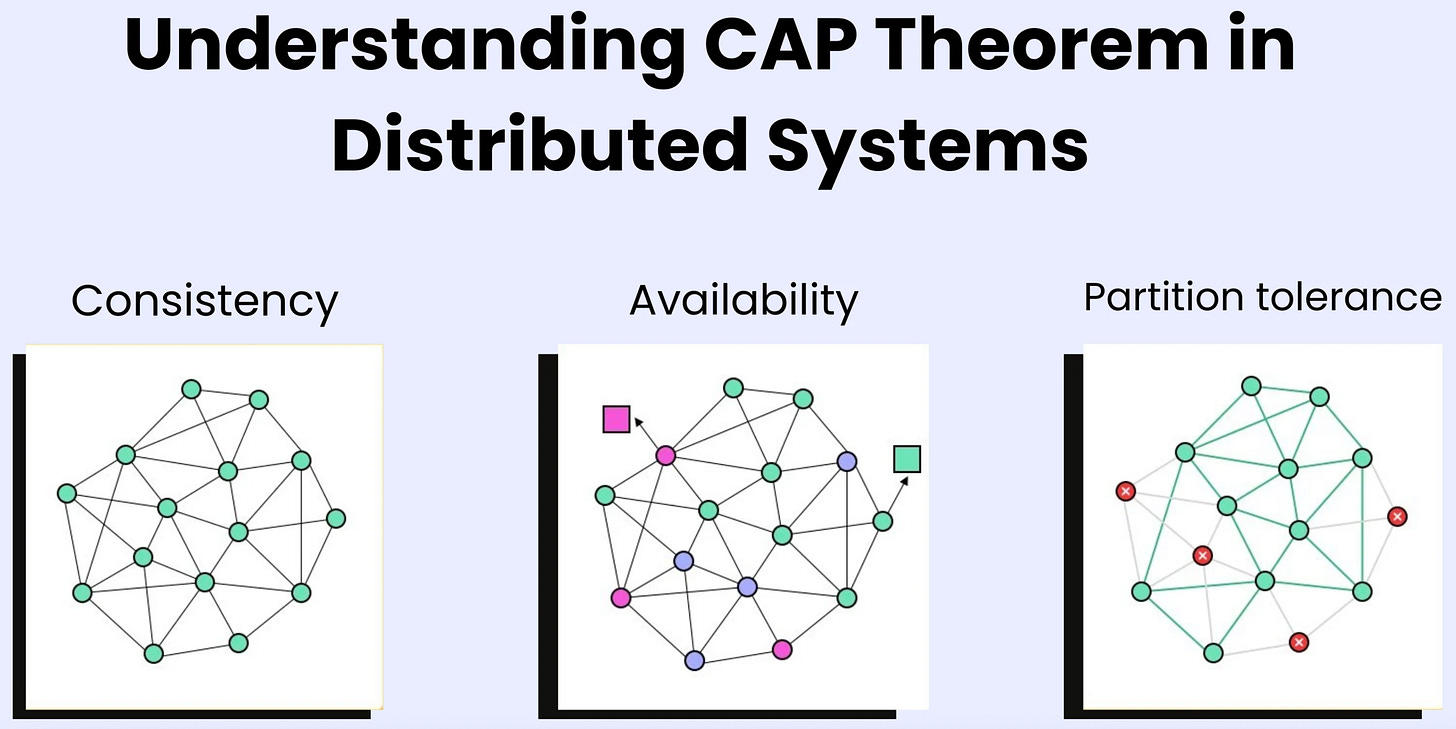

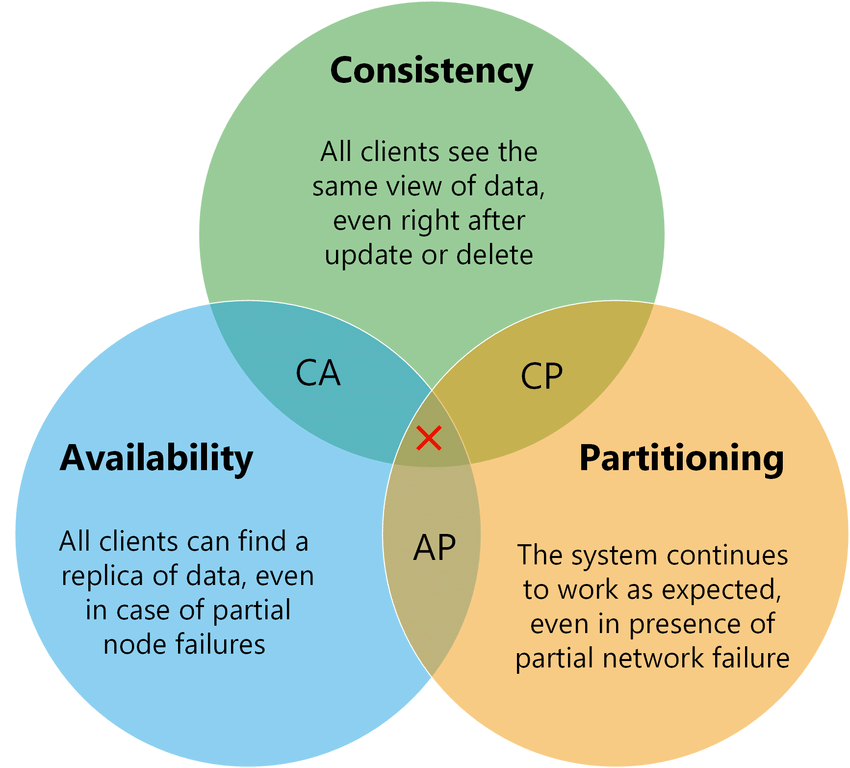

It states that a distributed data store can effectively provide only two of the following three guarantees simultaneously:

1. The Three Guarantees

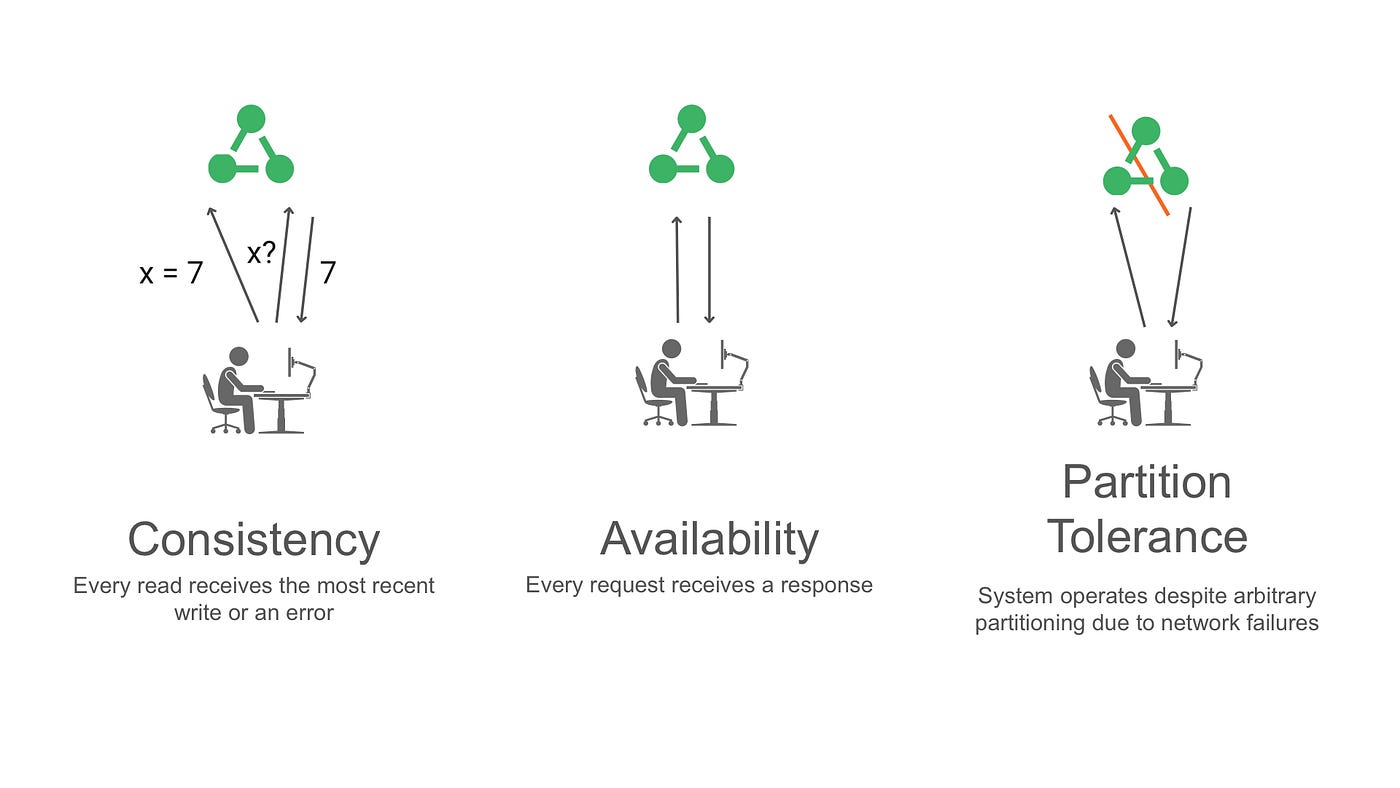

Consistency (C):

Every read receives the most recent write or an error. In other words, all users see the same data at the same time, no matter which node they connect to. If data is updated on Node A, Node B must reflect that update before it serves any further reads.

Availability (A):

Every request sent to a working node receives a (non-error) response, without the guarantee that it contains the most recent write. The system always answers, even if some nodes are down or cannot communicate with each other. You might get slightly old data, but you will get data.

Partition Tolerance (P):

The system continues to operate despite an arbitrary number of messages being dropped or delayed by the network between nodes. Essentially, if the connection between Node A and Node B breaks (a “partition”), the system as a whole keeps running instead of going down.

2. The “Pick Two” Trade-off

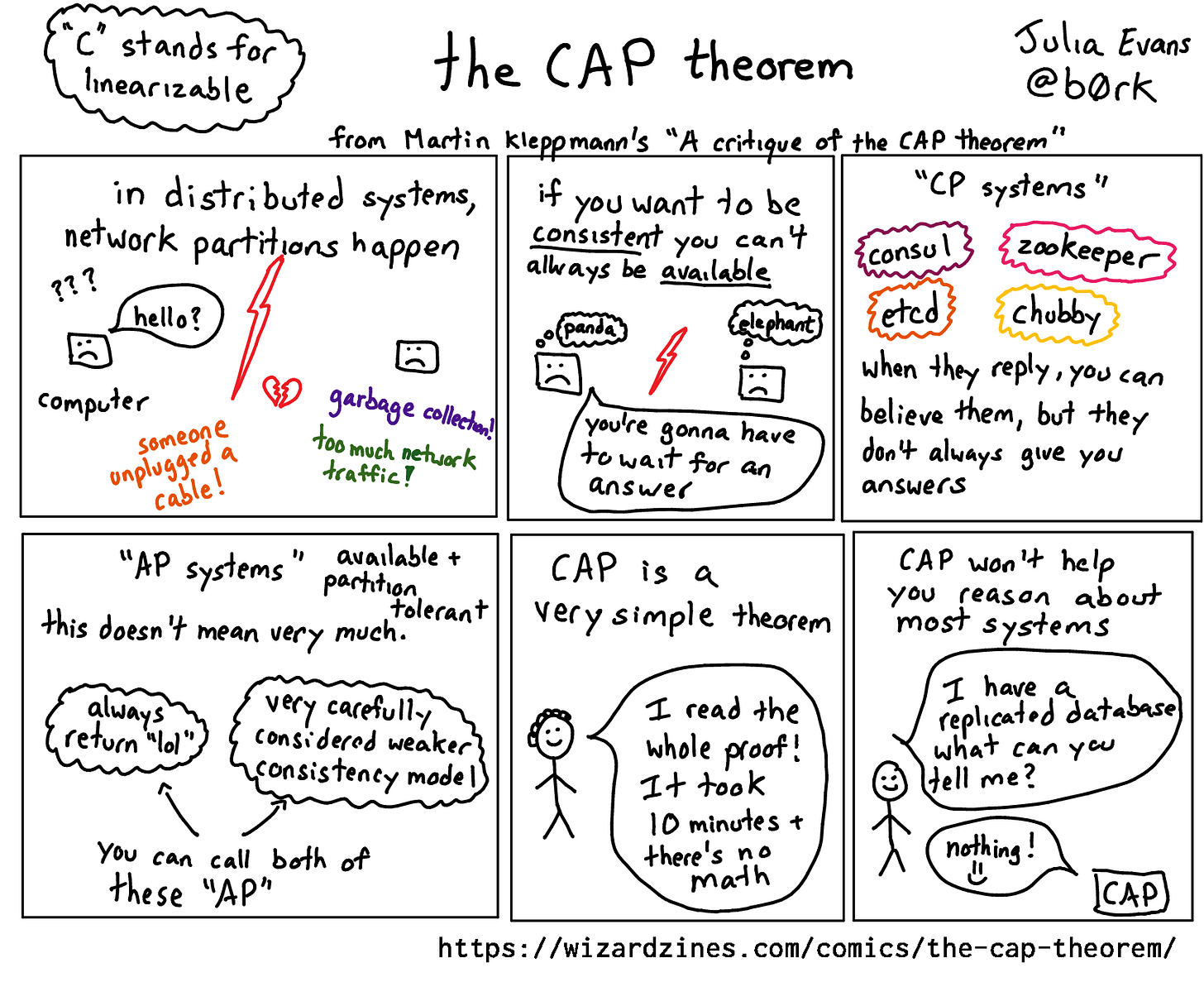

In reality, because networks are unreliable, Partition Tolerance (P) is mandatory for any distributed system. You cannot guarantee that the network will never fail.

Therefore, when a network failure (a partition) occurs, you are left with a binary choice: cancel the operation and return an error (Consistency), or proceed with potentially stale data (Availability).
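
To make that choice concrete, here is a minimal sketch of a single replica handling a read during a partition. The `Replica` class, its `mode` labels, and the `Unavailable` exception are hypothetical names made up for this illustration; real databases expose this trade-off through their own configuration, not a simple flag.

```python
from dataclasses import dataclass


class Unavailable(Exception):
    """Raised when a replica refuses to answer rather than risk stale data."""


@dataclass
class Replica:
    mode: str                  # "CP" or "AP" (hypothetical labels for this sketch)
    value: str = "v1"          # last value this replica has seen
    partitioned: bool = False  # True when the replica cannot reach its peers

    def read(self) -> str:
        if self.partitioned and self.mode == "CP":
            # Consistency first: we cannot confirm this is the latest write.
            raise Unavailable("cannot confirm latest write during partition")
        # Availability first (or no partition): answer with what we have.
        return self.value


def try_read(replica: Replica) -> str:
    """Return the replica's answer, or the string "error" if it refuses."""
    try:
        return replica.read()
    except Unavailable:
        return "error"


cp = Replica(mode="CP", partitioned=True)
ap = Replica(mode="AP", partitioned=True)
print(try_read(cp))  # error
print(try_read(ap))  # v1 (possibly stale)
```

Both replicas hold the same data and face the same partition; the only difference is which guarantee they give up.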

This creates three categories of systems:

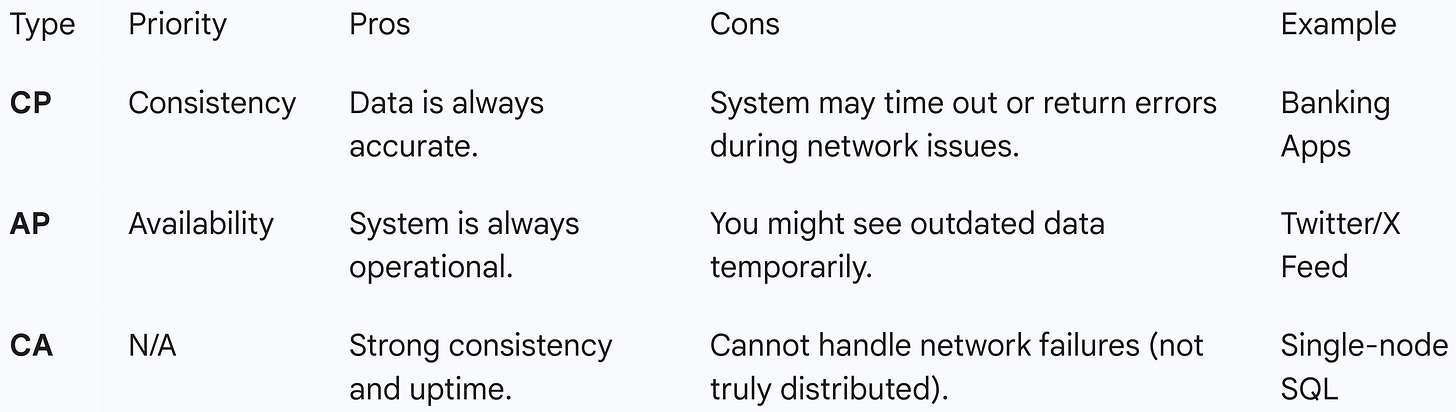

CP (Consistency + Partition Tolerance)

CP systems prioritize data accuracy above all else. When a network partition occurs (meaning the nodes lose contact with one another), the system refuses any request it cannot serve consistently rather than risk showing incorrect data. Practically, this means if you try to read or write data during a network glitch, the system may return an error or time out. This architecture is essential for industries like banking or financial services; it is far better for an ATM to temporarily say “Service Unavailable” than to show you a wrong account balance or allow you to withdraw money you don’t actually have. Commonly cited examples include MongoDB and Redis, although where a given database lands depends on how it is configured.
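
One common way to get CP behavior is to require a majority of replicas to acknowledge each write. The sketch below assumes that approach; `cp_write`, `QuorumNotReached`, and the list of `reachable` flags are hypothetical names for illustration and do not reflect how MongoDB or Redis implement it internally.

```python
from typing import Dict, List


class QuorumNotReached(Exception):
    """Raised when fewer than a majority of replicas can acknowledge a write."""


def cp_write(replicas: List[Dict[str, str]], reachable: List[bool],
             key: str, value: str) -> int:
    """Apply the write only if a strict majority of replicas is reachable.

    Returns the number of replicas that stored the value.
    """
    majority = len(replicas) // 2 + 1
    live = [r for r, ok in zip(replicas, reachable) if ok]
    if len(live) < majority:
        # Refuse the write: accepting it on a minority could let the two
        # sides of the partition diverge.
        raise QuorumNotReached(f"need {majority} replicas, reached {len(live)}")
    for replica in live:
        replica[key] = value
    return len(live)


replicas = [{"balance": "100"} for _ in range(3)]

# One node is cut off: 2 of 3 is still a majority, so the write succeeds.
print(cp_write(replicas, [True, True, False], "balance", "90"))  # 2

# Two nodes are cut off: the remaining node must reject the write.
try:
    cp_write(replicas, [True, False, False], "balance", "80")
except QuorumNotReached as exc:
    print("Service Unavailable:", exc)
```

The minority side of the partition is the part of the system that becomes unavailable; the majority side keeps working.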

AP (Availability + Partition Tolerance)

AP systems are designed with the philosophy that the show must go on. When the network breaks, the system keeps accepting reads and writes on all nodes, even if those nodes cannot communicate with each other to sync updates. This guarantees that the user always gets a response (high availability), but that response might be slightly outdated (“stale”). Once the network is restored, the nodes sync up in the background, a concept known as “eventual consistency.” This is ideal for social media feeds or e-commerce shopping carts, where it is more important to let a user post a status or add an item than to block them just because some nodes cannot reach each other. Examples include Cassandra and DynamoDB.
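
Here is a rough sketch of that background sync. It assumes a "last write wins" rule based on a timestamp attached to every write, which is only one of several reconciliation strategies; the `write` and `merge` helpers and the integer timestamps are invented for this example.

```python
from typing import Dict, Tuple

Versioned = Tuple[int, str]  # (timestamp, value)


def write(store: Dict[str, Versioned], key: str, value: str, ts: int) -> None:
    """Accept a write locally, without contacting any other node."""
    store[key] = (ts, value)


def merge(a: Dict[str, Versioned], b: Dict[str, Versioned]) -> None:
    """Reconcile two nodes after the partition heals (last write wins).

    For each key, both nodes end up with the entry that has the highest
    timestamp. Ties are broken by comparing the values, so the result is
    the same regardless of which node starts the sync.
    """
    for key in set(a) | set(b):
        candidates = [store[key] for store in (a, b) if key in store]
        newest = max(candidates)
        a[key] = newest
        b[key] = newest


node_a: Dict[str, Versioned] = {}
node_b: Dict[str, Versioned] = {}

# During the partition, each node keeps accepting writes on its own.
write(node_a, "status", "hello", ts=1)
write(node_b, "status", "hello world", ts=2)
write(node_a, "cart", "book", ts=3)

print(node_a["status"])  # (1, 'hello')  <- stale compared to node_b

# The network is restored and the nodes converge.
merge(node_a, node_b)
print(node_a == node_b)  # True
print(node_a["status"])  # (2, 'hello world')
```

Note the cost of this rule: the older write to `status` is silently discarded, which is acceptable for a status update but would not be for a bank balance.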

CA (Consistency + Availability)

CA systems attempt to provide perfect consistency and perfect availability simultaneously, but this combination comes with a major catch: the system cannot tolerate network partitions. Because network failures are inevitable in any distributed system, CA essentially only exists in non-distributed, single-node systems. If a CA system is distributed and a network failure does happen, it has to give up either C or A; it cannot maintain both. Therefore, traditional relational databases like MySQL or PostgreSQL are considered CA systems only when running on a single server: in that setup they prioritize accurate data and uptime, but there is no second node to be partitioned from.