Understanding Out-of-Order Execution

How Modern CPUs Execute More Instructions Than You Think

“Performance comes from letting fast hardware run ahead of slow dependencies.”

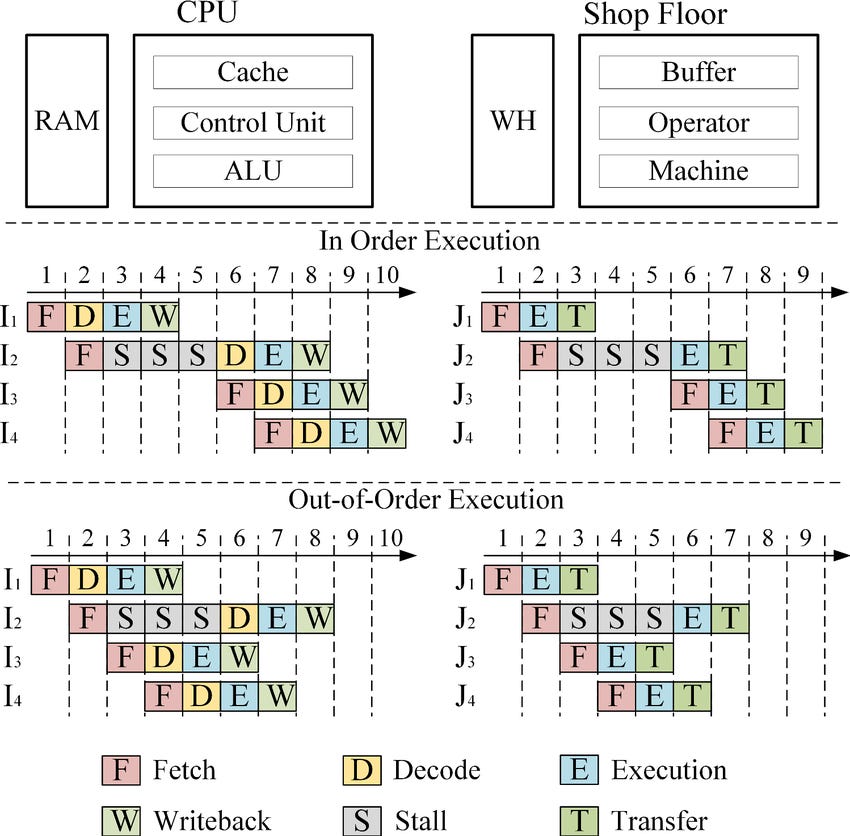

Out-of-order execution is one of the crown jewels of modern CPU design. It is a technique that allows a processor to rearrange the order of instruction execution internally to keep its functional units busy, reduce stalls, and extract massive amounts of parallelism (even from code that looks strictly sequential).

At a high level, the CPU is no longer a strict left-to-right executor. Instead, it becomes a scheduler: it analyzes which instructions are blocked, which are ready, and which can run in parallel. The end result is that instructions may execute out of order, but they must retire (commit results) in order, preserving program correctness.

Why Out-of-Order Execution Exists

Modern CPUs are deeply pipelined, superscalar, and extremely fast. But memory accesses, data dependencies, and branch instructions regularly slow things down. If the CPU were forced to wait every time an instruction stalled, pipelines would empty and throughput would collapse.

Out-of-order execution solves this by allowing the CPU to:

Skip instructions waiting on memory

Execute independent instructions ahead of time

Reorganize work so no execution unit sits idle

Hide latency (especially memory latency)

This transforms the CPU into a high-performance parallel engine—even when the software looks sequential.

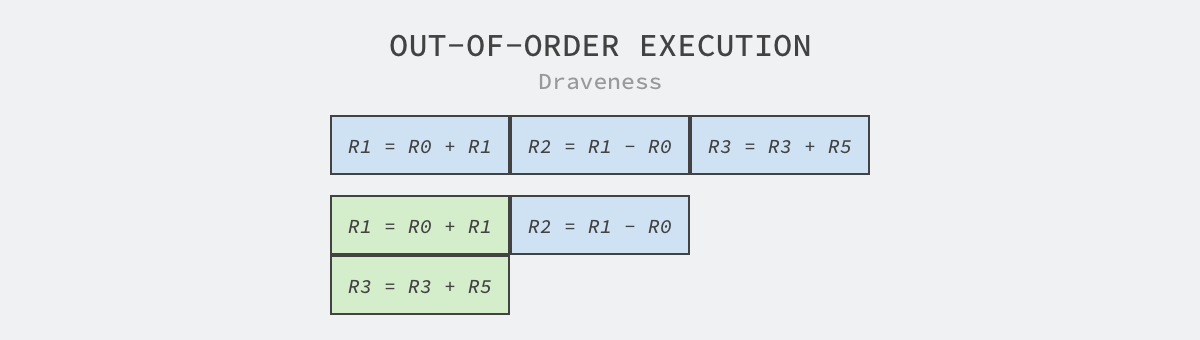

The Core Idea

Instead of executing instructions strictly in program order:

Fetch/decode still happen in order.

The CPU analyzes instructions and identifies dependencies.

Instructions whose inputs are ready are sent to execution units as soon as possible, even if earlier instructions are stalled.

Results are held in temporary structures until it is safe to retire them in correct program order.

Execution becomes fluid, dynamic, and heavily optimized.

The Hardware Structures That Make It Possible

Out-of-order execution relies on several specialized hardware components.

1. Reservation Stations

These are holding areas where decoded instructions wait until their operands are ready.

Each station tracks:

instruction type

source operands (or where they will come from)

destination register

execution readiness

As soon as all operands arrive, a reservation station dispatches the instruction to the appropriate execution unit (ALU, FPU, load/store unit, etc.).

2. Register Renaming

One of the biggest performance killers is the false dependency—two instructions that appear to depend on the same register but logically do not.

False dependencies, also known as name dependencies, are conflicts in a CPU’s execution pipeline that arise from the reuse of the same storage location (like a register name) by different instructions, even though there is no true flow of data between those instructions. They are “false” because they do not reflect the actual logic or data requirements of the program and can be resolved by the hardware or compiler without changing the program’s intended outcome.

The Example. Imagine we are baking two different cakes (Calculation A and Calculation B), but we only have one mixing bowl (R1).

1. ADD R1, R2, R3 ; R1 = 10 + 20 (Result is 30)

2. STORE R1, [MemA] ; Save 30 to memory

...

3. ADD R1, R4, R5 ; R1 = 50 + 50 (Result is 100)

4. STORE R1, [MemB] ; Save 100 to memoryThe Problem (Without Renaming). The CPU looks at Line 3 and panics. It thinks, “I can’t touch R1 yet! Line 2 is still trying to read it to save it to memory!” The CPU stalls Line 3, waiting for Line 2 to finish.

The Solution (With Renaming). The CPU realizes that the R1 in Line 3 is totally different from the R1 in Line 1. It secretly maps them to different physical registers inside the chip.

It rewrites the instructions internally like this:

1. ADD PhysA, R2, R3 ; Write to Physical Register A

2. STORE PhysA, [MemA] ; Read from Physical Register A

...

3. ADD PhysB, R4, R5 ; Write to Physical Register B (No waiting!)

4. STORE PhysB, [MemB] ; Read from Physical Register B

Now, Line 3 can execute simultaneously with Line 2. The dependency was “false” because it was just about the name R1, not the actual data.

Register renaming solves this by mapping architectural registers to a larger pool of physical registers, eliminating false dependencies and enabling parallel execution.

How it works

Logical vs. Physical. Programs use logical (architectural) registers. The processor has many more physical registers (often hidden from the programmer).

Mapping. A “rename table” tracks which physical register holds the current value for each logical register.

Renaming. When an instruction needs to write to a logical register (e.g.,

R1), the processor assigns it an available physical register (e.g.,P100) and updates the rename table.Dependency Removal. If another instruction later writes to

R1, it gets a new physical register (e.g.,P101), even though the program still calls itR1. This separates the writes, preventing unnecessary waiting.True Dependencies Maintained. True data dependencies (Read-After-Write, RAW) are preserved by tracking which physical registers hold ready data, allowing out-of-order execution when possible.

3. Reorder Buffer (ROB)

The ROB is essential. It:

maintains program order

holds results of out-of-order execution

ensures the state of the register file updates in order

If an instruction completes, its result sits in the ROB until all earlier instructions have retired. Only then is the result committed.

This is what maintains correctness despite chaotic execution ordering.

In out-of-order execution, retirement (or graduation) is the final stage where an instruction’s results are made permanently visible and resources (like registers) are freed, but critically, it happens in the original program order, even though execution happened out-of-order, ensuring correct program behavior. It’s the process of “committing” results to the architectural state, after all older instructions have also retired, using structures like the Reorder Buffer (ROB) to manage this sequential completion, which is vital for handling exceptions and maintaining correctness.

4. Load/Store Queue

Memory operations are tricky because loads can depend on earlier stores.

The load/store queue tracks all in-flight memory instructions to ensure:

loads get the correct value

out-of-order memory operations don’t violate correctness

The CPU may even forward values from un-committed stores to subsequent loads.

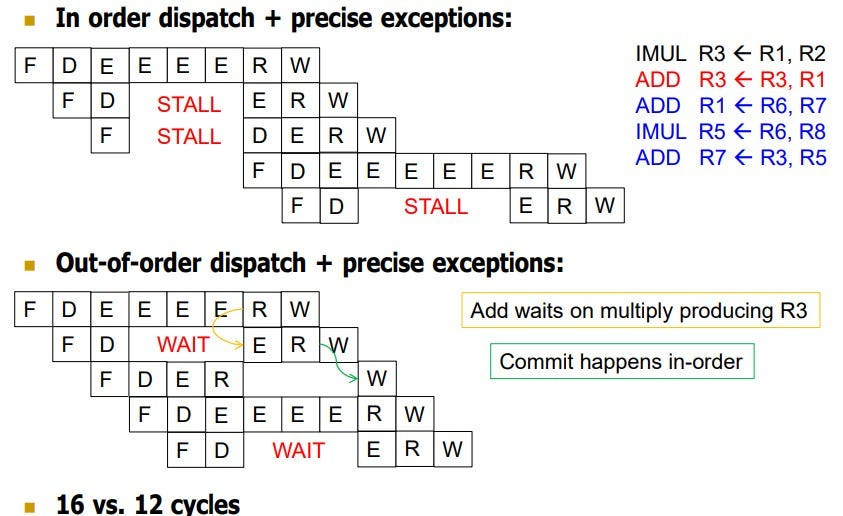

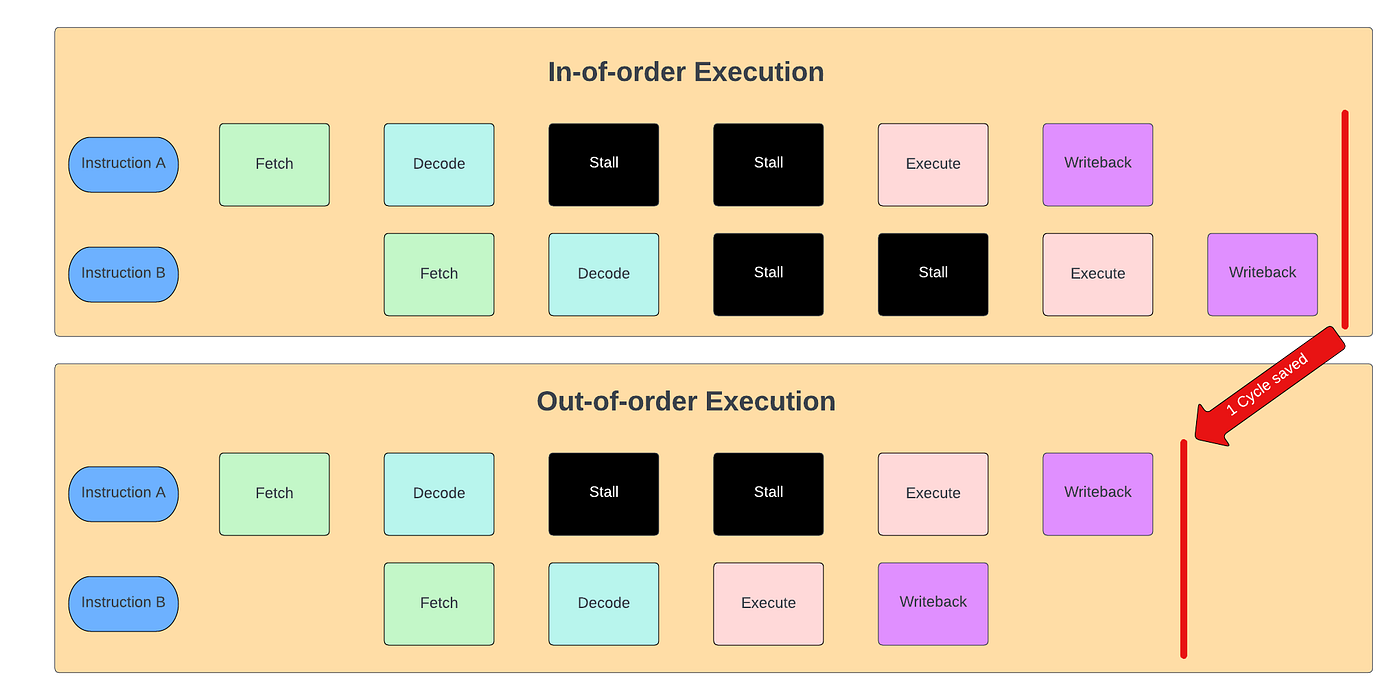

An Example. Why It Helps

Consider:

1. x = load [A] // Memory stall: slow

2. y = x + 1 // Depends on #1

3. z = 5 + 7 // Independent

4. w = z * 2 // Depends on #3In an in-order CPU:

Instruction 1 stalls

Instructions 2, 3, 4 all wait

In an out-of-order CPU:

Instruction 1 stalls (waiting on memory)

Instruction 2 is blocked (depends on x)

Instruction 3 runs immediately

Instruction 4 runs after 3

The pipeline stays full

The CPU hides the memory latency by executing everything that can run ahead of the stall.

Speculative Execution. The Perfect Partner

Out-of-order execution works hand-in-hand with:

branch prediction

speculative execution

The CPU predicts which branch will be taken, speculatively issues instructions past the branch, and executes them out of order. If prediction was correct, great—latency hidden. If wrong, speculative results are discarded.

Speculative + out-of-order execution is why modern CPUs are fast, but it’s also why vulnerabilities like Spectre became possible: speculation leaves traces in cache and timing behavior.

Performance Benefits

Hides memory latency

The biggest win. Memory is slow; CPU is too fast to wait.Maximizes functional unit utilization

Keeps ALUs, FPUs, load units, and branch units busy simultaneously.Enables instruction-level parallelism

Even single-threaded programs run with enormous parallelism under the hood.Reduces stalls from data hazards

True dependencies are unavoidable, but false ones are eliminated through register renaming.Improves overall throughput

Many instructions finish sooner, even if they retire in order.

Why Retirement Still Happens In Order

Out-of-order execution would be unsafe without in-order retirement.

Retirement ensures:

precise exceptions

correct program state

rollback on mispredicts

no partial updates to registers or memory

Thus, the CPU executes in whatever order maximizes performance—but commits results in strict program order.

Conclusion

Out-of-order execution is one of the most advanced techniques in CPU architecture. It turns the CPU into a dynamic, latency-hiding, highly parallel engine that rearranges work internally for maximal speed—without the programmer ever needing to know.

The impact is enormous: even simple scalar code benefits from parallelism, stalls are largely hidden, and execution units operate at full capacity whenever possible.

It is one of the reasons modern CPUs achieve astonishing performance despite the inherent slowness of memory and the sequential semantics of software.

At this point I have to ask: Will there be a book? I wish I could download these articles to my ebook reader.

veryyy interestinggg